Digital twins in manufacturing refer to continuously executing orchestration platforms within manufacturing environments that enable real-time coordination, system-level reasoning, and industrial execution across production assets and processes. Graph technology is especially suited to modeling and analyzing the highly networked nature of complex manufacturing operations with connected assets, material flows, and dependencies within and between manufacturing sites.

Advances in the technical capacity to collect, transmit, and analyze vast amounts of data in real time have made it possible to build digital twins at scale. These digital twins operate as active execution systems, maintaining state, propagating change, and supporting closed-loop decision-making in complex industrial operations. This article explores the challenges and opportunities in developing robust digital twins for manufacturing environments.

Digital Twin Technology in Manufacturing Systems

Digital twin technology in manufacturing is no longer defined solely by visualization or simulation. In advanced industrial environments, a digital twin functions as a continuously executing system abstraction that synchronizes physical operations, computational models, and decision logic in real time. Its purpose is not merely to observe production systems, but to reason about them, anticipate system behavior, and influence operational outcomes.

At this level of maturity, digital twins operate within the manufacturing control fabric, integrating operational technology (OT), enterprise systems, and analytics into a coherent execution layer. Their value emerges not from isolated insights but from their ability to maintain state, propagate change, and coordinate decisions across interconnected assets and processes.

Core Principles of Digital Twins in Manufacturing Systems

Enterprise-grade digital twins used in manufacturing systems are governed by several foundational principles that distinguish them from traditional monitoring or modeling tools.

Persistent system state

A digital twin maintains a continuously evolving representation of the physical system’s state. Unlike simulations that reset between runs, the twin preserves historical context, operational conditions, and transitional states, enabling longitudinal analysis and informed decision-making.
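To make the persistent-state principle concrete, here is a minimal Python sketch that assumes an in-memory, append-only store. The class `TwinStateStore` and its methods are hypothetical illustrations, not a product API. A production twin would typically back this with a time-series or graph database.

```python
from __future__ import annotations

import bisect
from dataclasses import dataclass, field
from typing import Any


@dataclass
class TwinStateStore:
    """Hypothetical append-only state store: updates never overwrite history."""
    _times: dict[str, list[float]] = field(default_factory=dict)
    _states: dict[str, list[dict[str, Any]]] = field(default_factory=dict)

    def update(self, asset_id: str, timestamp: float, changes: dict[str, Any]) -> None:
        times = self._times.setdefault(asset_id, [])
        states = self._states.setdefault(asset_id, [])
        if times and timestamp < times[-1]:
            raise ValueError("updates must arrive in timestamp order")
        # Merge the changes into the latest snapshot so each entry is a full state
        snapshot = {**states[-1], **changes} if states else dict(changes)
        times.append(timestamp)
        states.append(snapshot)

    def current(self, asset_id: str) -> dict[str, Any]:
        return self._states[asset_id][-1]

    def state_at(self, asset_id: str, timestamp: float) -> dict[str, Any] | None:
        """State in effect at `timestamp`, or None if it predates the first update."""
        times = self._times.get(asset_id, [])
        idx = bisect.bisect_right(times, timestamp) - 1
        return self._states[asset_id][idx] if idx >= 0 else None


store = TwinStateStore()
store.update("press-7", 0.0, {"mode": "running", "temp_c": 60})
store.update("press-7", 10.0, {"temp_c": 72})
store.update("press-7", 20.0, {"mode": "maintenance"})
print(store.state_at("press-7", 15.0))  # {'mode': 'running', 'temp_c': 72}
```

Every snapshot is retained, so the same store can answer "what is the state now?" and "what was the state when the defect occurred?" This is the longitudinal analysis described above.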

Bidirectional synchronization

Data flow in advanced twins is not one-way. Physical systems update the twin in real time via telemetry, while the twin can influence physical behavior via control signals, optimization recommendations, or automated interventions.

Executable models

The twin is built on models that can be executed at runtime. These models encode system behavior, constraints, and logic, allowing the twin to simulate future states, evaluate alternatives, and enforce rules under live operating conditions.

Closed-loop control

Rather than serving as a passive observer, the twin participates in closed feedback loops. It detects deviations, evaluates impact, and triggers corrective actions either autonomously or in coordination with human operators.
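The closed-loop principle, together with the bidirectional synchronization it depends on, can be sketched as a single detect, evaluate, act step. Telemetry flows in as `measured`, and a correction flows back out. The thresholds, the proportional `gain`, and the use of "number of downstream assets" as an impact score are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Action:
    kind: str    # "none", "auto_adjust", or "escalate"
    detail: str


def closed_loop_step(measured: float, setpoint: float, tolerance: float,
                     downstream_assets: int, auto_limit: int = 2,
                     gain: float = 0.5) -> Action:
    """One pass of a hypothetical detect -> evaluate -> act loop."""
    error = measured - setpoint
    # Detect: is the live telemetry outside the tolerance band?
    if abs(error) <= tolerance:
        return Action("none", "within tolerance")
    # Evaluate: use the number of affected downstream assets as a crude impact score
    if downstream_assets <= auto_limit:
        # Act autonomously: a proportional correction sent back to the physical asset
        correction = -gain * error
        return Action("auto_adjust", f"apply correction {correction:+.2f}")
    # Act with people: high-impact deviations go to a human operator
    return Action("escalate", f"deviation {error:+.2f} affects {downstream_assets} assets")


print(closed_loop_step(104.0, 100.0, tolerance=1.0, downstream_assets=1))
```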

Together, these principles elevate the digital twin from a representational artifact to an active operational system.

Digital Twin vs. Simulation vs. 3D Models

Although often conflated, digital twins, simulations, and 3D models serve fundamentally different roles in manufacturing systems.

Simulations are typically scenario-based and deterministic. They operate on predefined inputs, execute offline or in batch mode, and produce results that inform planning or design decisions. Once completed, they do not retain state or adapt to live conditions.

3D models focus on geometric representation. They provide spatial context and visual fidelity but lack behavioral logic, temporal awareness, and operational coupling to real-world systems.

Digital twins, by contrast, are stateful, continuously synchronized, and behaviorally expressive. They integrate geometry, logic, and live data into a single executable representation that evolves alongside the physical system. This allows them to support runtime decision-making rather than retrospective analysis.

The distinction becomes critical in manufacturing environments where conditions change continuously, and decisions must be made under uncertainty and time pressure.

Digital Twin vs. Digital Thread

The digital thread and the digital twin are complementary but not interchangeable concepts.

The digital thread focuses on information continuity. It ensures that data generated across the product lifecycle (design, engineering, manufacturing, operations, and servicing) remains traceable, consistent, and accessible. Its primary function is lineage: understanding where data originated, how it evolved, and how it connects across systems.

The digital twin, in contrast, focuses on operational intelligence and execution. It consumes data from the digital thread and goes a step further by interpreting it in real time, evaluating system behavior, and supporting or automating decision-making.

In advanced manufacturing architectures, the digital thread provides the historical and structural backbone, while the digital twin functions as the live reasoning layer, bridging past design intent with present operational reality.

Evolution of Digital Twins in Industrial Engineering

Digital twins did not emerge as a discrete innovation, but as the result of a long-term convergence between systems engineering, industrial automation, data infrastructure, and computational modeling. Understanding this evolution is essential for advanced practitioners, not as historical background, but as a way to recognize why modern digital twins are architected the way they are, and where their current limitations originate.

What distinguishes mature digital twin initiatives today is not novelty, but architectural lineage: each generation of twins reflects both the capabilities and constraints of the technological paradigms that preceded it.

Systems Engineering Origins and Early Cyber-Physical Models

The conceptual foundations of digital twins can be traced back to systems engineering practices developed in the aerospace and defense industries during the mid-20th century. These domains required rigorous modeling of complex, tightly coupled systems operating under extreme conditions, where failure analysis and mission continuity were critical.

Early “twin-like” constructs consisted of physical replicas and deterministic computational models used to simulate specific scenarios, often manually configured and executed. While these models lacked real-time data integration, they introduced several enduring principles: system decomposition, dependency modeling, and behavioral abstraction.

Crucially, these early cyber-physical models were engineered artifacts, not data-driven ones. Their accuracy depended on expert knowledge, predefined assumptions, and controlled operating conditions. This determinism made them reliable within narrow bounds, but brittle in the face of variability, an issue that would later become central in manufacturing environments.

Digital Twins in the Industry 4.0 Era

The transition from engineered replicas to operational digital twins accelerated with the rise of Industry 4.0. Manufacturing systems became increasingly instrumented, interconnected, and data-rich, exposing the limitations of traditional automation architectures built around fixed logic and siloed control layers.

Several converging developments reshaped the landscape:

- Ubiquitous sensing and connectivity, enabling continuous telemetry from machines, lines, and facilities

- Standardized industrial communication protocols, allowing heterogeneous equipment to participate in shared data ecosystems

- Enterprise system integration, connecting shop-floor events with planning, quality, and supply chain systems

- Commercial maturity of graph-based solutions, providing native encoding and retrieval of the complex relationships among machines, processes, and materials

Within this context, digital twins began to serve as integration and orchestration layers, rather than isolated analytical tools. Their role expanded from post hoc analysis to real-time coordination, linking operational data, engineering models, and business objectives.

However, many early Industry 4.0 implementations still treated twins as monitoring dashboards or advanced analytics projects, rather than as executable system abstractions. This limited their ability to drive sustained operational change.

From Monitoring Tools to Decision Systems

The most significant shift in the evolution of digital twins in industrial and manufacturing environments has been their transition from descriptive representations to decision-capable systems.

As manufacturing environments grew more volatile, driven by product variability, supply chain disruption, energy constraints, and labor dynamics, static optimization strategies proved insufficient. Digital twins began incorporating real-time evaluation logic, scenario comparison, and automated response mechanisms.

Modern twins increasingly support:

- Runtime intervention, adjusting parameters or workflows based on live conditions

- Adaptive optimization, recalculating strategies as constraints evolve

- System-level reasoning, evaluating the downstream impact of local changes

This evolution reframes the digital twin as a continuous decision environment rather than a reporting interface. The twin does not merely reflect what is happening; it evaluates what should happen next, given competing objectives and operational constraints.

At this stage of maturity, the effectiveness of a digital twin depends less on raw data volume and more on model expressiveness, dependency awareness, and the ability to propagate change across interconnected systems. All three are naturally supported by graph-based representations, which can encode and traverse complex manufacturing dependencies in real time.

Core Technologies Behind Enterprise-Grade Digital Twins

Enterprise-grade digital twins are built on a converged execution architecture. Their effectiveness depends on how well the layers of sensing, modeling, computation, intelligence, security, and visualization are integrated into a coherent, state-aware system. These layers must function as an interdependent whole, where decisions in one layer directly influence behavior and reliability across the system. Graph-based approaches provide the context and connections that tie these capabilities together through explicit relationships and constraints within and across these layers of concern.

At advanced maturity levels, architectural choices in each layer determine the digital twin’s ability to scale, reason, and act under real-world constraints. Weaknesses introduced at the sensing, modeling, or execution layers propagate upward, limiting the twin’s operational authority and undermining trust in its decisions. As a result, enterprise digital twins must be designed as execution systems first, not as collections of analytical or visualization components.

Rather than replacing existing operational, analytical, or enterprise systems, digital twins serve as a unifying layer for execution and reasoning. They consume signals from IoT platforms, manufacturing execution systems, enterprise applications, and analytics tools, maintain system state across these domains, and enable dependency-aware decision-making without duplicating or displacing underlying infrastructure. This positioning allows organizations to preserve proven systems while introducing real-time coordination, system-level reasoning, and closed-loop execution capabilities.

IoT Infrastructure, Sensing Strategy, and Data Fidelity

The sensing layer defines the boundary between physical reality and its digital abstraction. In advanced digital twin systems, the objective is not to maximize data collection, but to acquire decision-relevant signals at the appropriate resolution and cadence. Excessive instrumentation can introduce noise, inflate cost, and strain bandwidth, while insufficient sensing limits observability and weakens behavioral models. Mature digital twin architectures, therefore, align sensor granularity with decision criticality, prioritizing fidelity where operational risk, safety constraints, or optimization potential are highest. Execution authority may likewise be distributed within the system so that critical real-time safety concerns are addressed locally, rather than waiting for data to propagate to a more remote decision layer. Optimization decisions may be made remotely, where broader data can be gathered, and the resulting directives can then be propagated back to local closed-loop controllers.

Raw sensor data rarely flows into enterprise systems unchanged. Production-grade digital twins employ a combination of edge preprocessing and centralized ingestion to balance responsiveness with system-wide visibility. Edge-level filtering, aggregation, and anomaly flagging reduce latency and data volume while enabling localized autonomy for time-sensitive decisions. Centralized ingestion layers, in contrast, support cross-asset correlation, historical analysis, and strategic optimization. Practical implementations adopt hybrid execution strategies that explicitly define which transformations occur at the edge and which are deferred to centralized platforms.
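The three edge-level transformations named above (filtering, aggregation, anomaly flagging) can be sketched in a few lines. The function name, the window size, and the validity rule are hypothetical. The validity rule assumes a sensor that cannot legitimately read below zero.

```python
from statistics import mean


def edge_summarize(samples: list[float], window: int, limit: float) -> list[dict]:
    """Hypothetical edge step: reduce raw samples to per-window summaries."""
    # Filtering: drop invalid readings (NaN, or negative for this assumed sensor)
    valid = [s for s in samples if s == s and s >= 0.0]  # s == s is False for NaN
    summaries = []
    for start in range(0, len(valid), window):
        chunk = valid[start:start + window]
        summaries.append({
            # Aggregation: forward min/mean/max instead of every raw sample
            "min": min(chunk),
            "mean": mean(chunk),
            "max": max(chunk),
            # Anomaly flagging: lets the edge react locally before central ingestion
            "anomaly": max(chunk) > limit,
        })
    return summaries


print(edge_summarize([1.0, 2.0, 3.0, float("nan"), 4.0, 10.0], window=3, limit=5.0))
```

Only the summaries would be sent to the centralized ingestion layer. The flag could also trigger a local response immediately.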

Without semantic context, even high-quality sensor data remains operationally brittle. Advanced digital twins enforce semantic tagging that links measurements to assets, processes, and system states, ensuring consistent interpretation across engineering, operations, and analytics layers. Temporal consistency is equally critical: measurements from distributed sources must remain synchronized in time to preserve causal validity. By maintaining strict alignment across data streams, digital twins ensure that correlations reflect real system behavior rather than artifacts of sampling delay or clock drift.
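Semantic tagging and temporal alignment can be combined in an "as-of" join. Each reading carries the asset and quantity it describes, and each value in one stream is paired with the most recent value of the same asset in another stream, but only within a tolerance. The `Reading` type and `align_asof` function are hypothetical. The sketch assumes that clock-offset correction has already happened upstream.

```python
from __future__ import annotations

import bisect
from dataclasses import dataclass


@dataclass(frozen=True)
class Reading:
    asset: str      # semantic tag: the asset this value belongs to
    quantity: str   # semantic tag: what is measured, e.g. "spindle_temp_c"
    t: float        # timestamp in seconds, assumed already corrected for clock offset
    value: float


def align_asof(left: list[Reading], right: list[Reading], tolerance: float):
    """Pair each left reading with the latest right reading of the same asset taken
    at or before it. If that reading is older than `tolerance` seconds, the pair gets
    None instead, so a stale value is never treated as simultaneous."""
    streams: dict[str, list[Reading]] = {}
    for r in sorted(right, key=lambda r: r.t):
        streams.setdefault(r.asset, []).append(r)
    times = {asset: [r.t for r in rs] for asset, rs in streams.items()}

    pairs = []
    for reading in sorted(left, key=lambda r: r.t):
        candidates = streams.get(reading.asset, [])
        idx = bisect.bisect_right(times.get(reading.asset, []), reading.t) - 1
        match = None
        if idx >= 0 and reading.t - candidates[idx].t <= tolerance:
            match = candidates[idx]
        pairs.append((reading, match))
    return pairs
```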

Digital Modeling and Executable Virtual Representations

At the core of the digital twin lies its model: not merely a descriptive artifact, but an executable representation that evolves continuously as system state changes. In advanced manufacturing environments, models function as living system abstractions that encode behavior, constraints, and decision logic rather than as static documentation.

Modern digital twins rely on multiple modeling paradigms to balance accuracy, scalability, and robustness. Physics-based models encode first-principles behavior and offer high interpretability in well-understood domains, though they require careful calibration and can become computationally expensive at scale. Data-driven models infer behavior from operational history and handle variability effectively, but demand strong governance to mitigate opacity, bias, and model drift as conditions evolve.

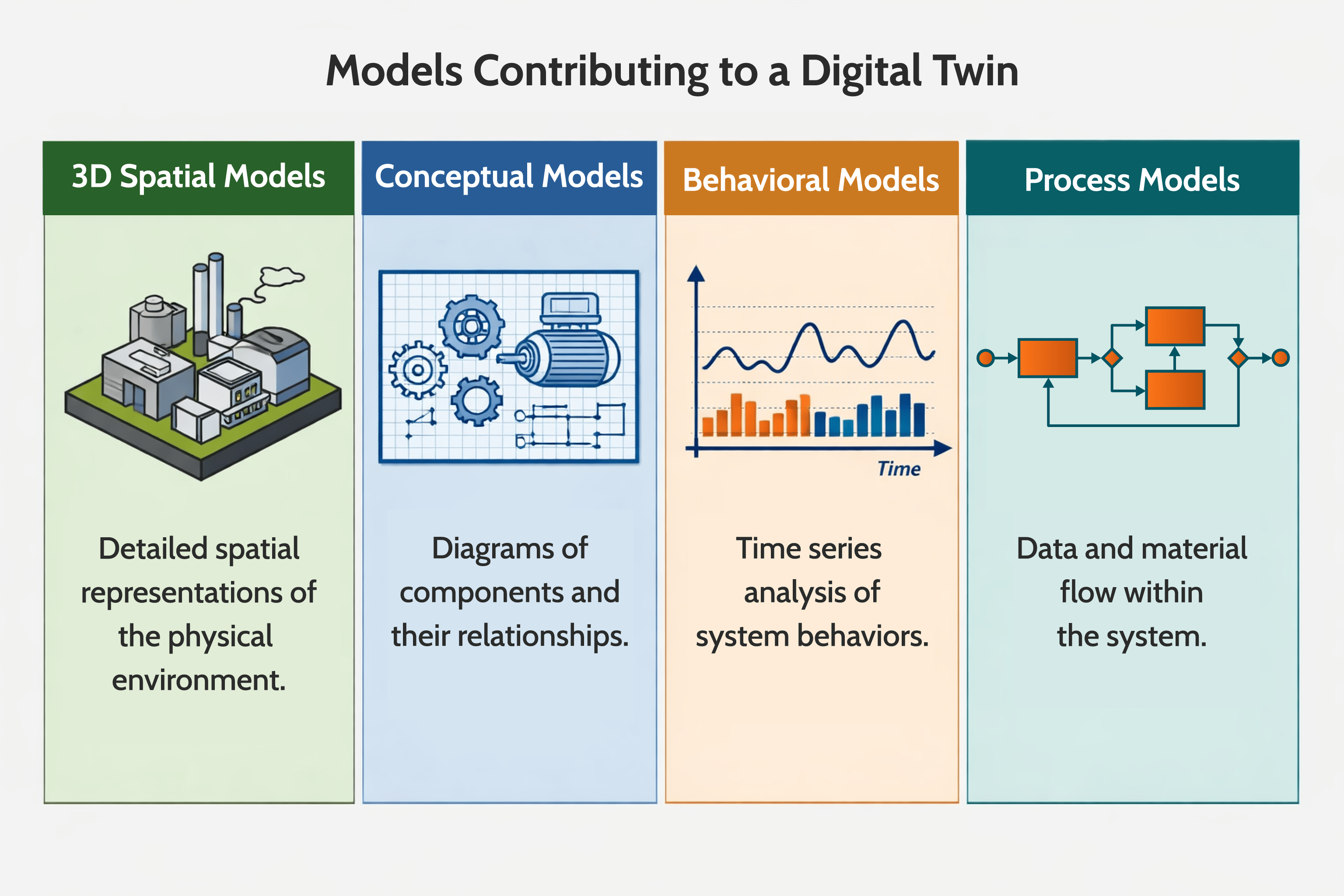

Digital twins are made up of many different models that describe different aspects of how the elements of the twin work together. Spatial models, conceptual models, behavior models and process models are all needed to complete a functional digital twin.

To address these limitations, mature digital twin architectures increasingly adopt hybrid and probabilistic modeling approaches. By combining deterministic structure with learned or stochastic components, these models remain resilient under uncertainty while preserving constraint enforcement and interpretability.
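A hybrid model can be as simple as a physics baseline plus a learned correction of its residual, with the remaining residual spread serving as a crude uncertainty band. The sketch below assumes a steady-state thermal model (temperature rise equals thermal resistance times power) and a linear wear effect. The constant `r_thermal` and all names are hypothetical.

```python
from statistics import mean, pstdev


def physics_temp(ambient_c: float, power_kw: float, r_thermal: float = 2.0) -> float:
    """First-principles part: steady-state rise = thermal resistance x power.
    r_thermal (deg C per kW) is an assumed calibration constant."""
    return ambient_c + r_thermal * power_kw


class HybridTempModel:
    """Physics baseline plus a data-driven linear correction of its residual."""

    def fit(self, ambient, power, wear, observed):
        # Residual = what the physics model fails to explain
        residuals = [o - physics_temp(a, p) for a, p, o in zip(ambient, power, observed)]
        # Ordinary least squares: residual ~ slope * wear + intercept
        mw, mr = mean(wear), mean(residuals)
        sxx = sum((w - mw) ** 2 for w in wear)
        sxy = sum((w - mw) * (r - mr) for w, r in zip(wear, residuals))
        self.slope = sxy / sxx
        self.intercept = mr - self.slope * mw
        # Spread of what is still unexplained gives a simple probabilistic band
        fitted = [self.intercept + self.slope * w for w in wear]
        self.sigma = pstdev([r - f for r, f in zip(residuals, fitted)])
        return self

    def predict(self, ambient, power, wear):
        mu = physics_temp(ambient, power) + self.intercept + self.slope * wear
        return mu, (mu - 2 * self.sigma, mu + 2 * self.sigma)
```

The physics term keeps the prediction anchored and interpretable. The learned term only has to explain the deviation from it.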

Beyond numeric prediction, enterprise-grade digital twins must also reason explicitly about system state and behavior over time. State machines and temporal logic enable the twin to encode permissible transitions, enforce sequencing rules, and evaluate the impact of events as they unfold, capabilities essential for runtime decision-making, exception handling, and safe rollback in live manufacturing environments.
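Permissible transitions and safe rollback can be expressed as an explicit state machine. The states and the transition table below are a hypothetical example for a single machine. Note that rollback is itself checked against the table, so the twin cannot "undo" its way out of a fault.

```python
# Hypothetical transition table: state -> states it may legally move to
ALLOWED = {
    "idle": {"setup"},
    "setup": {"running", "idle"},
    "running": {"paused", "fault", "idle"},
    "paused": {"running", "idle"},
    "fault": {"maintenance"},
    "maintenance": {"idle"},
}


class MachineStateMachine:
    def __init__(self, initial: str = "idle"):
        self.state = initial
        self.history = [initial]

    def transition(self, target: str) -> None:
        # Enforce sequencing rules: reject anything not in the table
        if target not in ALLOWED[self.state]:
            raise ValueError(f"illegal transition {self.state} -> {target}")
        self.state = target
        self.history.append(target)

    def rollback(self) -> None:
        """Return to the previous state, only if that move is itself permitted."""
        if len(self.history) < 2:
            raise ValueError("nothing to roll back")
        previous = self.history[-2]
        if previous not in ALLOWED[self.state]:
            raise ValueError(f"rollback {self.state} -> {previous} is not permitted")
        self.history.pop()
        self.state = previous
```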

Cloud–Edge Execution Architectures

Scalable digital twins rarely operate exclusively in the cloud or at the edge. Instead, advanced implementations adopt tiered execution architectures that distribute responsibility across multiple computational layers. This distribution reflects differing latency constraints, safety requirements, and decision scopes inherent in physical manufacturing systems.

Latency-sensitive decisions, such as those affecting physical motion, safety interlocks, or immediate fault response, must execute close to the source of action. Lightweight edge agents, therefore, enforce local constraints, respond to rapidly changing conditions, and maintain operational continuity even under partial connectivity loss. Cloud-based layers aggregate system-wide data, perform optimization across assets or lines, and support strategic coordination that can tolerate higher latency.

Architectural boundaries in cloud–edge systems are shaped as much by physical reality as by software design. Bandwidth limitations, network reliability, and deterministic response requirements impose hard constraints on where decisions can safely execute. Mature digital twin architectures explicitly define these control boundaries to ensure that time-critical actions remain local while higher-level reasoning benefits from global visibility.

Balancing decentralized autonomy with centralized orchestration is a core architectural challenge. Excessive centralization introduces latency and coordination bottlenecks, while excessive autonomy fragments system behavior and undermines coherence. Effective digital twins resolve this tension by clearly specifying which decisions are local and which are system-wide, and how conflicts are detected and resolved.
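One way to make control boundaries explicit is a routing rule that assigns each decision to a tier based on its deadline and scope. The same rule can flag decisions whose authority overlaps across tiers. The latency figures, the routing rule, and the names below are illustrative assumptions, not measured values or a standard.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Decision:
    name: str
    deadline_ms: float   # how soon the decision must take effect
    scope: set[str]      # assets the decision touches


def route(d: Decision, edge_ms: float = 5.0, cloud_ms: float = 250.0) -> str:
    """Assign a decision to an execution tier. Latency figures are illustrative assumptions."""
    if d.deadline_ms < edge_ms:
        raise ValueError(f"{d.name}: no tier can meet a {d.deadline_ms} ms deadline")
    if d.deadline_ms < cloud_ms:
        return "edge"    # too time-critical to wait for a cloud round trip
    return "cloud" if len(d.scope) > 1 else "edge"  # multi-asset scope needs a global view


def authority_conflicts(decisions: list[Decision]) -> list[tuple[str, str]]:
    """Pairs of decisions that touch a shared asset but execute on different tiers."""
    routed = [(d, route(d)) for d in decisions]
    found = []
    for i, (a, tier_a) in enumerate(routed):
        for b, tier_b in routed[i + 1:]:
            if tier_a != tier_b and a.scope & b.scope:
                found.append((a.name, b.name))
    return found
```

Flagged pairs are where a resolution policy is needed. A common choice is that the local safety action always wins and the system-wide plan is recomputed.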

AI Integration for Predictive and Prescriptive Intelligence

Artificial intelligence transforms digital twins from reactive mirrors into anticipatory systems that evaluate future states and recommend actions. In enterprise manufacturing environments, its value lies not in model sophistication alone, but in how effectively intelligence is operationalized within live decision loops.

AI enables predictive maintenance by combining anomaly detection and time-series forecasting to identify early signs of degradation before failures occur. This allows proactive maintenance scheduling that reduces unplanned downtime while preserving asset life. Quality management also becomes forward-looking: by correlating process parameters with output characteristics in real time, digital twins can anticipate defects and recommend corrective adjustments before scrap is produced.
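The two predictive-maintenance ingredients named above can be illustrated with deliberately simple stand-ins. A rolling z-score serves as the anomaly detector, and a straight-line trend extrapolated to a failure threshold serves as the forecaster. Real deployments would use richer models. The functions, window, and limits here are assumptions.

```python
from statistics import mean, pstdev


def zscore_anomalies(series, window=5, z_limit=3.0):
    """Indices whose value deviates more than z_limit std devs from the preceding window."""
    flagged = []
    for i in range(window, len(series)):
        ref = series[i - window:i]
        sd = pstdev(ref)
        if sd > 0 and abs(series[i] - mean(ref)) / sd > z_limit:
            flagged.append(i)
    return flagged


def hours_to_threshold(hours, values, threshold):
    """Fit a line to a degradation indicator and extrapolate to the threshold.
    Returns None if the indicator is not trending toward it."""
    mh, mv = mean(hours), mean(values)
    slope = (sum((h - mh) * (v - mv) for h, v in zip(hours, values))
             / sum((h - mh) ** 2 for h in hours))
    if slope <= 0:
        return None
    intercept = mv - slope * mh
    # Time at which the fitted line crosses the threshold, relative to the latest sample
    return (threshold - intercept) / slope - hours[-1]
```

The remaining-hours estimate is what allows maintenance to be scheduled into a planned window rather than after a failure.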

In dynamic environments, reinforcement learning supports adaptive scheduling and resource allocation as constraints and objectives evolve. These capabilities must be governed carefully. Enterprise-grade digital twins require explainable, traceable AI decisions, supported by versioning, performance monitoring, and human override to ensure that optimization does not compromise trust, safety, or compliance.

Cybersecurity as a Foundational Architectural Layer

As digital twins gain the ability to influence physical operations, they become critical attack surfaces. Security cannot be layered on top; it must be embedded throughout the architecture.

- Data provenance and integrity ensure that incoming signals are authenticated and protected against manipulation or spoofing, particularly in distributed sensing environments.

- Model governance prevents unauthorized changes to models, rules, or thresholds that could have real-world consequences.

- Zero-trust edge deployment requires continuous authentication, encrypted communication, and safe failure modes under partial compromise.

- Role-based and context-aware access ensures that visibility and control align with operational responsibility and situational context.
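The first item in the list above, data provenance and integrity, can be illustrated with a message-authentication gate at ingestion. The sketch uses Python's standard `hmac` module with SHA-256 and a per-device sequence number to reject replays. Per-device shared keys and the `TelemetryGate` name are assumptions, and key distribution and rotation are out of scope.

```python
from __future__ import annotations

import hashlib
import hmac
import json


def sign(message: dict, key: bytes) -> str:
    """HMAC-SHA256 over a canonical JSON encoding of the message."""
    payload = json.dumps(message, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


class TelemetryGate:
    """Hypothetical ingestion gate: accept only authentic, fresh messages."""

    def __init__(self, device_keys: dict[str, bytes]):
        self.device_keys = device_keys   # one shared secret per device (assumed)
        self.last_seq: dict[str, int] = {}

    def accept(self, message: dict, signature: str) -> bool:
        device = message.get("device")
        key = self.device_keys.get(device)
        # Reject unknown devices and payloads altered after signing (spoofing, tampering)
        if key is None or not hmac.compare_digest(sign(message, key), signature):
            return False
        # Reject replays: sequence numbers must strictly increase per device
        if message["seq"] <= self.last_seq.get(device, -1):
            return False
        self.last_seq[device] = message["seq"]
        return True
```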

Graph-Based System Modeling and Visualization

In advanced digital twin architectures, the graph-based layer serves as an execution substrate, encoding dependencies, constraints, and state transitions.

As digital twins scale in complexity, relational structure becomes as essential as raw data. Manufacturing systems are defined not only by individual assets, but by the dependencies, flows, and causal relationships that connect them. Graph-based modeling provides a native abstraction for explicitly representing these relationships and enabling real-time system-level reasoning.

In a graph-based digital twin, machines, components, processes, and materials are represented as nodes, while physical, logical, and operational dependencies are represented as edges. This structure enables the twin to evaluate system behavior in context rather than as a set of disconnected signals. Impact analysis and root-cause investigation are performed via graph traversal, allowing the tracing of downstream effects of failures or configuration changes across interconnected assets and processes.
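Impact analysis and root-cause investigation are both reachability questions on the dependency graph. Downstream traversal answers "what does this failure affect?", and upstream traversal answers "what could have caused this?" The plant graph below is a hypothetical example, and the sketch uses plain breadth-first search rather than any particular graph product's API.

```python
from collections import deque

# Hypothetical plant graph: an edge (u, v) means "v depends on u" (material, air, signal flow)
EDGES = [
    ("compressor-1", "press-2"),
    ("compressor-1", "paint-booth"),
    ("press-2", "weld-cell"),
    ("weld-cell", "final-assembly"),
    ("paint-booth", "final-assembly"),
    ("conveyor-4", "final-assembly"),
]


def adjacency(edges):
    downstream, upstream = {}, {}
    for u, v in edges:
        downstream.setdefault(u, []).append(v)
        upstream.setdefault(v, []).append(u)
    return downstream, upstream


def reachable(graph, start):
    """Breadth-first traversal: every node reachable from `start`, excluding start."""
    seen, queue = {start}, deque([start])
    while queue:
        for nxt in graph.get(queue.popleft(), []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen - {start}


downstream, upstream = adjacency(EDGES)
impact = reachable(downstream, "compressor-1")     # impact analysis: what a failure affects
suspects = reachable(upstream, "final-assembly")  # root-cause candidates for a stoppage
```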

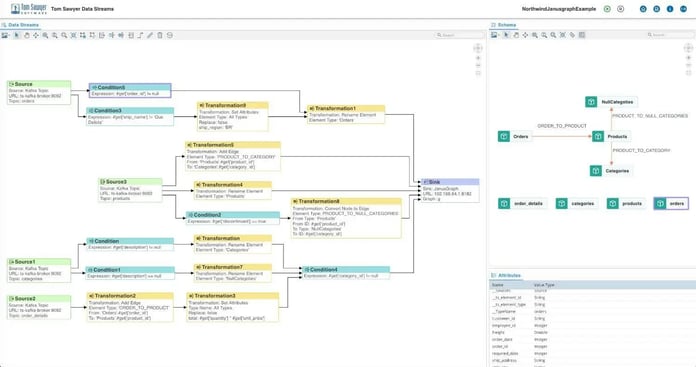

Graph-based data transformation diagram from Tom Sawyer Data Streams.

State propagation across the graph enables the digital twin to continuously evaluate how local changes affect the broader system, revealing cascading effects that are often delayed or non-obvious. Within this architectural layer, Tom Sawyer Software Data Streams supports continuous synchronization between operational events and the live graph model, ensuring that dependency visualization and impact analysis reflect the current system state rather than static snapshots.
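Event-driven state propagation can be sketched as follows. When an operational event changes one node, only its dependents are recomputed, and the cascade stops as soon as a node's effective status does not change. The propagation rule (a node's effective status is the worst of its own status and its upstream inputs') is an assumption chosen for simplicity. The sketch also assumes an acyclic dependency graph, and it is not how Tom Sawyer Data Streams is implemented.

```python
from collections import deque

SEVERITY = {"ok": 0, "degraded": 1, "down": 2}


class PropagatingGraph:
    """Hypothetical live graph: effective status = worst of own status and upstream inputs."""

    def __init__(self, edges):
        self.down, self.up = {}, {}
        for u, v in edges:
            self.down.setdefault(u, []).append(v)
            self.up.setdefault(v, []).append(u)
        nodes = set(self.down) | set(self.up)
        self.own = {n: "ok" for n in nodes}
        self.effective = dict(self.own)

    def _recompute(self, node):
        worst = self.own[node]
        for parent in self.up.get(node, []):
            if SEVERITY[self.effective[parent]] > SEVERITY[worst]:
                worst = self.effective[parent]
        return worst

    def on_event(self, node, status):
        """Apply one operational event; return the nodes whose effective status changed."""
        self.own[node] = status
        changed, queue = [], deque([node])
        while queue:
            current = queue.popleft()
            new = self._recompute(current)
            if new != self.effective[current]:
                self.effective[current] = new
                changed.append(current)
                queue.extend(self.down.get(current, []))  # cascade only on real change
        return changed
```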

By enabling real-time graph construction, traversal, and state propagation, this graph-based reasoning layer becomes a core execution mechanism, not a visualization add-on, connecting sensing, modeling, and decision logic into a unified operational abstraction.

Digital Twin Typologies in Manufacturing Environments

Digital twins in manufacturing do not exist as monolithic constructs. Mature implementations adopt layered, composable twin typologies, each addressing distinct scopes of abstraction, decision latency, and operational impact. Understanding these typologies is essential for designing scalable architectures that avoid over-modeling while preserving system-level insight.

Most advanced digital twins are systems of systems: each system performs its function within a specific scope of responsibility and coordinates with the systems upstream and downstream of it. Rather than choosing a single type of twin, advanced organizations deploy hierarchies of interconnected twins, each matched to the system it represents and synchronized with the others through shared state and dependency relationships.

Component-Level Digital Twins

Component-level digital twins represent the most granular abstraction in manufacturing environments. They are tightly coupled to individual parts or subassemblies and derive their behavior directly from sensor inputs.

These twins focus on sensor-coupled behavior models that capture localized phenomena, including vibration patterns, thermal stress, wear progression, and electrical characteristics. Their primary value lies in early anomaly detection and fine-grained condition assessment.

While component twins operate within narrow scopes, their outputs often feed higher-level twins. Accurate modeling of component behavior is therefore foundational; errors or latency at this level can propagate upward, distorting system-wide reasoning.

Asset and Equipment Twins

Asset-level twins aggregate multiple components into a coherent operational unit, such as a machine, robot, or production module. At this level, the twin begins to reflect functional behavior rather than isolated signals.

Key capabilities include:

- Lifecycle monitoring, tracking asset health across commissioning, operation, and maintenance phases

- Condition-based optimization, where operational parameters are adjusted dynamically based on asset state rather than fixed schedules

Asset twins often act as decision boundaries, determining when localized issues warrant intervention, escalation, or coordination with upstream and downstream processes.
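The hand-off from component twins to an asset twin, and the asset twin's role as a decision boundary, can be sketched as a weighted health roll-up followed by a three-way decision. The weights, the thresholds, and the rule that a weak component on an asset with dependents warrants escalation are hypothetical choices for illustration.

```python
def asset_health(component_health: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted health score in [0, 1] from component-level twins (weights are assumptions)."""
    total = sum(weights[c] for c in component_health)
    return sum(component_health[c] * weights[c] for c in component_health) / total


def asset_decision(component_health, weights, has_downstream_dependents,
                   intervene_below=0.7, escalate_below=0.4):
    score = asset_health(component_health, weights)
    worst = min(component_health.values())
    if score < escalate_below or (worst < escalate_below and has_downstream_dependents):
        return "escalate"    # coordinate with upstream/downstream processes
    if score < intervene_below or worst < intervene_below:
        return "intervene"   # handle locally, e.g. schedule maintenance on this asset
    return "monitor"
```

Checking the worst component as well as the weighted average keeps one failing part from being masked by healthy ones.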

System and Line-Level Twins

System-level twins model interactions across multiple assets, capturing interdependencies, constraints, and flow dynamics within production lines or manufacturing cells.

These twins enable:

- Bottleneck detection, identifying constraint points that limit throughput under varying conditions

- Flow optimization, balancing speed, quality, and resource utilization across interconnected assets
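For bottleneck detection, the simplest useful model is a serial line in which sustainable throughput cannot exceed the lowest effective station rate (nominal rate multiplied by availability). The station figures below are invented for illustration, and the model ignores buffers and blocking. It still shows how the constraint point moves as conditions change.

```python
def find_bottleneck(stations: dict[str, tuple[float, float]]) -> tuple[str, float]:
    """stations maps name -> (nominal rate in units/hour, availability in 0..1).

    Assumes a serial line without buffers: throughput is capped by the lowest
    effective rate (nominal rate x availability), so that station is the constraint.
    """
    effective = {name: rate * avail for name, (rate, avail) in stations.items()}
    name = min(effective, key=effective.get)
    return name, effective[name]


line = {
    "stamping": (120.0, 0.95),
    "welding": (90.0, 0.90),
    "painting": (100.0, 0.85),
    "assembly": (95.0, 0.98),
}
print(find_bottleneck(line))

# Under varying conditions the constraint moves: painting availability drops
line["painting"] = (100.0, 0.70)
print(find_bottleneck(line))
```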

At this level, state changes must propagate across multiple entities in near-real time. Event-driven synchronization mechanisms become critical, especially in environments where production states evolve continuously. Graph-based solutions natively capture the upstream and downstream relationships along which these critical signals must travel.

Graph-based data pipelines and real-time state propagation, such as those enabled by Tom Sawyer Data Streams, support this synchronization by streaming operational changes directly into the system’s dependency model, ensuring that downstream reasoning reflects the current operational reality rather than delayed snapshots.

Process and Network Twins

Process and network twins represent the highest level of abstraction, spanning multiple production lines, facilities, or even entire manufacturing networks. Their purpose is not local optimization, but systemic coordination under variability and uncertainty.

Capabilities at this level include:

- Cross-plant coordination, aligning production strategies across geographically distributed facilities

- Supply chain coupling, linking manufacturing behavior with material availability, logistics, and demand signals

- Scenario simulation, evaluating the impact of disruptions, policy changes, or strategic shifts before execution

These twins depend heavily on continuous data flow and relational awareness. Static integrations are insufficient; instead, they require streaming architectures that maintain synchronized system state across domains, enabling real-time what-if analysis and coordinated response.

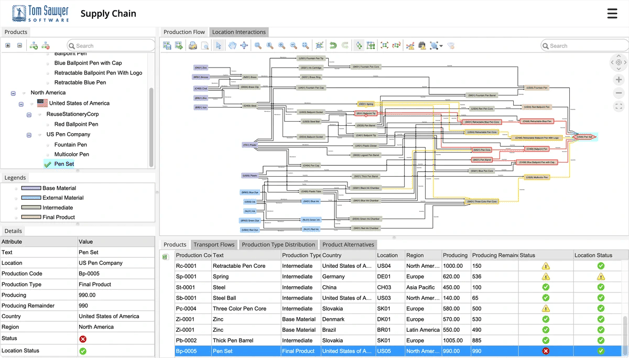

Tom Sawyer Software's Supply Chain demonstration showing the complex pathways and downstream impacts of disruptions in material flows.

At this scale, the digital twin becomes less a model of individual operations and more a decision fabric that integrates operational, logistical, and strategic perspectives into a unified execution layer.

Business and Operational Impact of Digital Twin Architectures

The true value of digital twin architectures emerges when they are embedded into operational workflows and decision loops. At enterprise scale, their impact is not measured by visualization quality or data volume, but by measurable changes in reliability, efficiency, and decision latency across manufacturing systems.

When properly implemented, digital twins shift organizations from reactive operations to anticipatory and adaptive execution, enabling performance improvements that static automation and analytics cannot sustain.

Predictive Maintenance and Failure Prevention

One of the most immediate operational impacts of digital twins is their ability to anticipate failures before they manifest as downtime.

Early anomaly detection

By continuously correlating sensor behavior with executable models, digital twins detect subtle deviations that traditional threshold-based systems miss. These deviations often indicate degradation patterns long before failure.

Maintenance prioritization

Rather than scheduling maintenance based on fixed intervals, digital twins evaluate asset health in context, considering operational criticality, downstream dependencies, and production schedules. This enables targeted interventions that minimize disruption while preserving asset integrity.
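Contextual prioritization can be sketched as a risk score: likelihood of failure multiplied by consequence, where consequence grows with criticality and with the number of downstream dependents found by graph traversal. A small bonus is added for assets with an imminent idle window. The fields, scales, and the 0.1 weight are hypothetical. The point is that the asset with the highest failure probability is not necessarily first.

```python
from dataclasses import dataclass


@dataclass
class AssetStatus:
    name: str
    failure_prob: float     # probability of failure within the planning horizon
    criticality: float      # 1 (minor) .. 5 (line-stopping), assigned by engineering
    downstream_count: int   # dependents found by graph traversal
    idle_window_h: float    # hours until the next planned idle slot


def priority(a: AssetStatus) -> float:
    # Risk = likelihood x consequence; consequence grows with criticality and dependents
    consequence = a.criticality * (1 + a.downstream_count)
    # Slight preference for assets with an idle window soon, so the work disrupts less
    convenience = 1.0 / (1.0 + a.idle_window_h)
    return a.failure_prob * consequence + 0.1 * convenience


assets = [
    AssetStatus("coolant-pump-7", 0.6, 2, 0, 1.0),
    AssetStatus("conveyor-2", 0.1, 4, 2, 48.0),
    AssetStatus("press-2", 0.3, 5, 4, 10.0),
]
ranked = sorted(assets, key=priority, reverse=True)
print([a.name for a in ranked])
```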

Accelerated Product and Process Engineering

Digital twins extend beyond operations into engineering, reducing the gap between design intent and production reality.

Virtual commissioning

Before physical deployment, processes, control logic, and equipment interactions can be validated in a digital environment. This reduces startup risk, shortens commissioning cycles, and uncovers integration issues early.
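Virtual commissioning comes down to driving control logic with a simulated plant and checking safety invariants at every step before any hardware moves. In the toy example below, a buffer must never exceed its capacity. The plant model, the consumption rate, and both controllers are hypothetical. The off-by-one bug in the second controller is the kind of integration issue this approach is meant to surface early.

```python
def controller(part_waiting: bool, buffer_level: int, capacity: int) -> bool:
    """Logic under test: release a part into the buffer only if there is room."""
    return part_waiting and buffer_level < capacity


def buggy_controller(part_waiting: bool, buffer_level: int, capacity: int) -> bool:
    return part_waiting and buffer_level <= capacity  # off-by-one: allows overfill


def virtual_commissioning(ctrl, capacity: int = 3, steps: int = 50) -> str:
    """Drive the logic with a simulated plant and check a safety invariant each tick."""
    buffer_level = 0
    for t in range(steps):
        part_waiting = True                   # worst case: upstream always has a part
        if ctrl(part_waiting, buffer_level, capacity):
            buffer_level += 1
        if t % 3 == 0 and buffer_level > 0:   # downstream consumes a part every third tick
            buffer_level -= 1
        if buffer_level > capacity:           # invariant: the buffer never overflows
            return f"overflow at tick {t}"
    return "ok"


print(virtual_commissioning(controller), virtual_commissioning(buggy_controller))
```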

Design-for-operability

By incorporating operational feedback into engineering models, digital twins enable designs that account for maintainability, variability, and real-world constraints, reducing costly redesigns and late-stage adjustments.

Operational Efficiency and Throughput Optimization

In complex manufacturing systems, the greatest efficiencies are achieved by observing and orchestrating system-level interactions. It is not helpful to over-optimize the output of one step in production if the downstream components are not ready to receive that output.

Constraint-aware optimization

Digital twins identify actual system constraints by modeling dependencies and flow dynamics across assets and processes. Optimization efforts can then focus on high-impact leverage points rather than local maxima.

Dynamic reconfiguration

As conditions change due to shifts in demand, equipment availability, or material constraints, digital twins enable dynamic reconfiguration of production parameters and workflows, maintaining throughput without sacrificing quality.

Real-Time, Contextual Decision-Making

Operational decisions are only as good as the context in which they are made. Digital twins provide that context by unifying data, models, and relationships into a coherent decision environment.

System-wide visibility

Rather than isolated dashboards, digital twins offer integrated views of system state, making interactions and dependencies explicit across operational layers.

Causal understanding

By explicitly modeling relationships, twins enable reasoning about cause and effect, supporting decisions that account for downstream impacts rather than surface-level symptoms.

Cost Optimization and Measurable ROI

While digital twins are not designed primarily as cost-reduction tools, their operational effects translate directly into measurable financial outcomes.

Reduced downtime

Early detection, targeted maintenance, and coordinated response reduce both planned and unplanned downtime, often yielding immediate operational savings.

Energy and material efficiency

By continuously evaluating process performance, digital twins identify inefficiencies in energy use and material flow, enabling optimization strategies that align cost reduction with sustainability objectives.

Enterprise Digital Twin Implementation Blueprint

Implementing a digital twin at enterprise scale is not a linear deployment exercise, but an iterative architectural process that aligns operational objectives, system boundaries, data flows, and decision authority into a cohesive execution framework. The architectural principles, execution mechanisms, and operational impacts discussed throughout this guide define what advanced digital twins are capable of, but the realization of that potential depends on how deliberately these elements are scoped and governed.

Rather than treating digital twins as one-time deployments, mature organizations approach them as evolving infrastructure. Strategic objective definition, disciplined boundary selection, continuous synchronization with operational reality, and controlled model evolution determine whether architectural intent translates into sustained operational value. Implementation, in this context, is not a checklist, but an ongoing alignment between system behavior, decision logic, and organizational maturity.

Graph-based solutions can provide the flexibility that a complex digital twin requires to evolve smoothly over time, readily modeling new networks of connections through every layer of the twin for each new use case it grows to support.

Industry Applications of Digital Twins in Manufacturing

While the architectural foundations of digital twins remain consistent, their concrete implementation varies significantly across industries. Differences in regulatory pressure, system criticality, production variability, and lifecycle duration shape how digital twins are modeled, synchronized, and operationalized within manufacturing environments.

Advanced digital twin deployments are therefore best understood through industry-specific decision patterns, rather than generic use cases.

Automotive and Mobility

In automotive and mobility manufacturing, digital twins are primarily driven by high product variability and tightly coupled production lines. Frequent model changes, just-in-time supply chains, and stringent quality requirements create environments where static optimization quickly becomes obsolete.

Digital twins in this domain are used to:

- Coordinate complex assembly sequences across robotic and human-operated stations

- Detect and resolve bottlenecks caused by configuration variability

- Align production planning with real-time material availability

System- and line-level digital twins are particularly critical, as minor disruptions can cascade rapidly across interconnected processes.

Aerospace and Defense

Aerospace and defense manufacturing emphasizes system integrity, traceability, and lifecycle longevity. Production volumes are lower, but system complexity and regulatory constraints are significantly higher. The cost of failure of these systems is also higher, both during manufacturing and in operation.

Digital twins in this sector support:

- Configuration management across long-lived assets

- Verification of system behavior under extreme or non-standard conditions

- Impact analysis of engineering changes on downstream assembly and certification

- Improved awareness of asset health and reliability during operation

Here, digital twins span both manufacturing and operational phases, supporting end-to-end lifecycle visibility from production through in-service asset management.

Electronics and Semiconductor Manufacturing

Electronics and semiconductor manufacturing environments are characterized by extreme precision, rapid cycle times, and sensitivity to micro-variations in process conditions.

Digital twins are applied to:

- Monitor and optimize tightly controlled fabrication steps

- Correlate process parameters with yield and defect patterns

- Coordinate tool utilization across highly automated facilities

At this scale, digital twins must operate with low latency and high data fidelity, as even minor delays or modeling inaccuracies can translate into significant yield loss.
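The following Python sketch is a simplified illustration of correlating process parameters with yield. It ranks parameters by the absolute Pearson correlation between each parameter and per-lot yield. The parameter names and per-lot values are invented for illustration. Correlation alone does not establish cause, and real yield analysis typically combines this kind of screening with multivariate and physics-based models.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length, at least 2 items")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # undefined for constant input; treated as uncorrelated here
    return cov / sqrt(var_x * var_y)


def rank_parameters(params, yields):
    """Rank parameters by |correlation| with yield, strongest first."""
    scores = {name: pearson(values, yields) for name, values in params.items()}
    return sorted(scores.items(), key=lambda item: abs(item[1]), reverse=True)


# Hypothetical per-lot data for five lots.
params = {
    "chamber_temp_C": [350, 352, 355, 358, 360],
    "gas_flow_sccm": [20, 21, 20, 21, 20],
}
yields = [0.95, 0.94, 0.92, 0.90, 0.88]

for name, r in rank_parameters(params, yields):
    print(f"{name}: r = {r:+.2f}")
```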

Smart Factories and Interconnected Production Networks

In smart factory environments, the emphasis shifts from individual production lines to networked coordination across facilities and functions.

Digital twins in these contexts enable:

- Cross-line and cross-plant synchronization

- Adaptive load balancing in response to demand or disruption

- Unified reasoning across production, logistics, and maintenance domains

These implementations rely heavily on explicit modeling of interdependencies, allowing decisions to be evaluated not just locally, but across the broader production ecosystem.
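The following Python sketch is a deliberately simple illustration of adaptive load balancing. When one plant is disrupted, its demand is reassigned to the plants with the most spare capacity. The greedy rule, plant names, and figures are hypothetical. A real network twin would also weigh the interdependencies described above, such as shared suppliers, logistics lead times, and changeover costs, and would typically use an optimization solver rather than a greedy pass.

```python
def rebalance(demand, capacity, load):
    """Greedily place `demand` units on plants with spare capacity,
    largest headroom first. Returns (allocation, unmet_demand)."""
    headroom = {plant: capacity[plant] - load.get(plant, 0) for plant in capacity}
    allocation = {}
    remaining = demand
    for plant in sorted(headroom, key=headroom.get, reverse=True):
        if remaining <= 0:
            break
        take = min(headroom[plant], remaining)
        if take > 0:
            allocation[plant] = take
            remaining -= take
    return allocation, remaining


# Hypothetical scenario: plant_B is disrupted and 300 units/day must move.
capacity = {"plant_A": 1000, "plant_C": 800}
load = {"plant_A": 850, "plant_C": 600}
print(rebalance(300, capacity, load))  # → ({'plant_C': 200, 'plant_A': 100}, 0)
```

Any unmet demand returned by the function signals that the disruption cannot be absorbed by the remaining plants and must be escalated to planners.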

Energy and Utilities

Energy and utility manufacturing systems operate continuously and under strict reliability constraints. Downtime is costly, accidents are potentially deadly, and external factors, including demand fluctuations and regulatory requirements, often influence system behavior and available mitigation strategies.

Digital twins support:

- Real-time monitoring and optimization of production and distribution assets

- Scenario evaluation for load shifts, maintenance windows, and fault conditions

- Coordination between generation, storage, and distribution components

- Controlled containment of abnormal situations before negative consequences propagate

In these environments, the digital twin functions less as a planning aid and more as a real-time operational companion, continuously evaluating system state and guiding decision-making under uncertainty. Digital twins are vital for coordinating a distributed set of human decision makers who manage safety within the production constraints of refineries and power plants, for example. As with a plane in flight, there is no “off button.” A safe landing requires a system-wide response and up-to-the-minute awareness of system-level effects, under conditions that fall outside normal operating parameters.
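Containment can be framed as a reachability question. Given a fault location, which assets can an abnormal condition still reach once candidate isolation points are closed? The following minimal Python sketch assumes that the process topology is a directed graph and that an isolation point, such as a closed valve or an open breaker, blocks propagation entirely. The topology and names are hypothetical. Real containment decisions also depend on process dynamics, safety interlocks, and operator procedures.

```python
from collections import deque

# Hypothetical process topology: an edge A -> B means an abnormal condition
# at A (pressure, contamination, fire) can propagate to B.
PROCESS = {
    "pump_2": ["valve_A", "valve_B"],
    "valve_A": ["heat_exchanger"],
    "valve_B": ["storage_tank"],
    "heat_exchanger": ["reactor", "storage_tank"],
    "reactor": [],
    "storage_tank": ["loading_bay"],
    "loading_bay": [],
}


def affected_nodes(graph, fault, isolated):
    """Nodes reachable from `fault` when nodes in `isolated` act as barriers.
    Isolated nodes are neither entered nor reported as affected."""
    seen = {fault}
    queue = deque([fault])
    while queue:
        node = queue.popleft()
        for successor in graph.get(node, []):
            if successor not in seen and successor not in isolated:
                seen.add(successor)
                queue.append(successor)
    return seen - {fault}


def best_single_isolation(graph, fault, candidates):
    """Candidate isolation point that leaves the fewest assets exposed."""
    return min(candidates, key=lambda c: len(affected_nodes(graph, fault, {c})))


print(sorted(affected_nodes(PROCESS, "pump_2", {"valve_A"})))
# → ['loading_bay', 'storage_tank', 'valve_B']
print(best_single_isolation(PROCESS, "pump_2", ["valve_A", "valve_B"]))  # → valve_A
```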

About the Author

Caroline Scharf, VP of Operations at Tom Sawyer Software, has 15 years of experience with Tom Sawyer Software in the graph visualization and analysis space, and more than 25 years of leadership experience at large and small software companies. She has a passion for process and policy in streamlining operations, a solution-oriented approach to problem solving, and is a strong advocate of continuous evaluation and improvement.

AI Disclosure: This article was generated with the assistance of artificial intelligence and has been reviewed and fact-checked by Caroline Scharf and Liana Kiff.

FAQ

Do digital twins require replacing existing manufacturing systems?

Digital twins do not require replacing existing manufacturing systems. In mature architectures, they are introduced as an additional abstraction layer that operates above operational technology, manufacturing execution systems, enterprise platforms, and engineering tools. Rather than disrupting established infrastructure, the twin integrates with these systems through event streams, APIs, and semantic mappings. This allows organizations to preserve proven control and transactional layers while adding real-time reasoning and coordination capabilities without forcing large-scale system migrations.

It is important to note that many of the systems that are operating in the field today did not benefit from an integrated engineering-to-production design paradigm. They may not have readily available models of their architecture or their operation that can be easily or directly integrated with the twin. These legacy systems are often long-lived and expensive to replace.

Most digital twin projects encounter systems that require manual intervention, both to close the gaps between documentation and actual operation and to deliver meaningful data, in context, to the digital twin. In such cases, trade-offs may be needed regarding how safely and effectively the legacy system can be made to coordinate with the digital twin and, consequently, which types of decision-making and control the twin can support.

How do digital twins scale across plants and networks?

Scaling digital twins across plants and networks is achieved through hierarchical and federated design, rather than by extending a single centralized model. Advanced deployments decompose complexity by composing multiple interconnected twins, each responsible for a defined scope and synchronized through shared state and dependency relationships. Local twins retain autonomy for latency-sensitive decisions, while higher-level twins coordinate optimization and strategy across facilities. This structure enables system-wide visibility and reasoning without sacrificing performance or operational stability.
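The hierarchical pattern can be sketched with three levels of Python classes. The sketch assumes that each local twin keeps its fast decision logic local and shares only an aggregated summary upward. Class names, fields, and the alarm rule are hypothetical. In a real deployment, the levels would be separate services communicating through event streams or APIs rather than in-process objects.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class LineTwin:
    """Local twin: owns one line's state and makes fast local decisions."""
    name: str
    throughput: float  # current output, units/hour
    capacity: float    # nominal output, units/hour
    alarms: int = 0

    def local_decision(self) -> str:
        # Latency-sensitive rule evaluated locally (hypothetical policy).
        return "slow_down" if self.alarms else "run"

    def summary(self) -> Dict[str, float]:
        # Only this aggregated view is shared with higher-level twins.
        return {"throughput": self.throughput, "capacity": self.capacity,
                "alarms": self.alarms}


@dataclass
class PlantTwin:
    """Plant-level twin: aggregates the summaries of its line twins."""
    name: str
    lines: List[LineTwin] = field(default_factory=list)

    def summary(self) -> Dict[str, float]:
        parts = [line.summary() for line in self.lines]
        return {key: sum(p[key] for p in parts)
                for key in ("throughput", "capacity", "alarms")}


@dataclass
class NetworkTwin:
    """Network-level twin: reasons across plants using plant summaries only."""
    plants: List[PlantTwin] = field(default_factory=list)

    def spare_capacity(self) -> Dict[str, float]:
        spare = {}
        for plant in self.plants:
            s = plant.summary()
            spare[plant.name] = s["capacity"] - s["throughput"]
        return spare


plant_1 = PlantTwin("plant_1", [LineTwin("L1", 90, 100), LineTwin("L2", 70, 100, alarms=1)])
plant_2 = PlantTwin("plant_2", [LineTwin("L1", 45, 50)])
network = NetworkTwin([plant_1, plant_2])
print(plant_1.lines[1].local_decision())  # → slow_down
print(network.spare_capacity())           # → {'plant_1': 40, 'plant_2': 5}
```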

Another aspect of scalability is consistency. Digital twins created without regard to industry standards for describing architecture patterns and strongly typing the equipment, data, and related decisions become expensive and difficult to maintain. They require investment of effort that is not easily transferable to the next digital twin in the next factory, building, or other unique operating environment. Consistency from one twin to another improves reusability of the investment in modeling and instrumentation, offering scalability across an industry, rather than a single implementation.
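Strong typing can be pictured as a shared schema that declares which kinds of assets exist and which relationships between them are legal, so that every new twin is validated against the same vocabulary. In the following Python sketch, the `AssetType` values and the allowed triples are hypothetical placeholders. In practice, they would be drawn from whichever industry standard the organization adopts.

```python
from enum import Enum


class AssetType(Enum):
    PUMP = "pump"
    VALVE = "valve"
    TANK = "tank"
    SENSOR = "sensor"


# Hypothetical schema: the (source type, relation, target type) triples allowed.
ALLOWED = {
    (AssetType.PUMP, "FEEDS", AssetType.VALVE),
    (AssetType.VALVE, "FEEDS", AssetType.TANK),
    (AssetType.SENSOR, "MEASURES", AssetType.PUMP),
    (AssetType.SENSOR, "MEASURES", AssetType.TANK),
}


def validate(assets, edges):
    """Return (edge, reason) pairs for every edge that breaks the schema."""
    problems = []
    for src, relation, dst in edges:
        if src not in assets or dst not in assets:
            problems.append(((src, relation, dst), "unknown asset"))
        elif (assets[src], relation, assets[dst]) not in ALLOWED:
            problems.append(((src, relation, dst), "relation not allowed"))
    return problems


assets = {
    "P-101": AssetType.PUMP,
    "V-7": AssetType.VALVE,
    "T-3": AssetType.TANK,
    "TT-9": AssetType.SENSOR,
}
edges = [
    ("P-101", "FEEDS", "V-7"),
    ("V-7", "FEEDS", "T-3"),
    ("TT-9", "MEASURES", "T-3"),
    ("T-3", "FEEDS", "P-101"),    # tank feeding a pump is not in the schema
    ("TT-9", "MEASURES", "X-1"),  # X-1 was never declared
]
for edge, reason in validate(assets, edges):
    print(edge, "->", reason)
```

Because the schema is shared data rather than logic embedded in one twin, the same checks can be reused unchanged in the next plant.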

How is security enforced in operational digital twins?

Security in operational digital twins is enforced as an architectural property rather than a post-deployment control. Data authenticity and integrity are continuously validated; controlled deployment processes govern model changes; and execution environments enforce strict isolation and authentication. Zero-trust principles extend from the cloud to edge components, ensuring that compromised subsystems cannot propagate unsafe behavior. Because digital twins influence real-world decisions, security mechanisms must be deeply embedded into data pipelines, model execution, and access control frameworks.
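At the message level, continuous validation of data authenticity and integrity can be as simple as attaching a keyed hash to each reading. The following Python sketch uses the standard library's `hmac` and `hashlib` modules with HMAC-SHA256. The message fields and the hard-coded key are hypothetical. A real deployment would add secure key provisioning and rotation, replay protection, and transport security rather than rely on this check alone.

```python
import hashlib
import hmac
import json


def sign(reading: dict, key: bytes) -> str:
    """HMAC-SHA256 over a canonical JSON encoding of the reading."""
    # sort_keys and fixed separators make the byte encoding deterministic.
    body = json.dumps(reading, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(key, body, hashlib.sha256).hexdigest()


def verify(reading: dict, signature: str, key: bytes) -> bool:
    """Accept the reading only if its signature matches."""
    # compare_digest performs a constant-time comparison.
    return hmac.compare_digest(sign(reading, key), signature)


KEY = b"example-key-do-not-use"  # hypothetical; never hard-code real keys
reading = {"sensor": "TT-9", "value": 81.4, "ts": 1700000000}
signature = sign(reading, KEY)

print(verify(reading, signature, KEY))                    # → True
print(verify(dict(reading, value=20.0), signature, KEY))  # → False
```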

What organizational capabilities are required for success?

The success of a digital twin initiative depends as much on organizational readiness as on technical implementation. Teams must think in systems terms rather than isolated functions, aligning engineering, operations, IT, and data disciplines around shared objectives and decision-making authority. Governance processes are required to manage model evolution and accountability, while operational teams must be prepared to trust and act on twin-generated insights. Organizations that treat digital twins as evolving infrastructure rather than one-time deployments are far more likely to realize sustained value.

Why is graph technology so well-suited to the architecture of digital twins for manufacturing?

Any piece of manufacturing equipment, and indeed any system of any kind, can be described by one or more interconnected graphs. Energy flowing through a plant and its equipment is described by a graph of devices linked by wires that ensure the safe and continuous flow of power. At a larger scale, the inputs and outputs passing from one part of a manufacturing facility to the next can be described as material flows between units in a plant. Sometimes these flows are continuous, through pipes, as in chemical refining processes; sometimes they involve conveyor belts or forklifts. Zooming out further still, the global supply chain, and even the cash flow model of a very large company, can be described by a graph. In a system-of-systems, there may be thousands of individual graphs, each describing a different aspect of that system. The flow of data within and between systems is also a graph. Graphs describe not only the producers, consumers, processes, and movements, but also the constraints that must be met.
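A graph can carry constraints as well as connections. The following Python sketch checks a proposed material flow against two classic flow-network rules. First, each edge's flow must lie between zero and its capacity. Second, every intermediate unit must pass on exactly what it receives. The units, capacities (tonnes per hour), and flows are hypothetical, and the steady-state conservation rule ignores buffering and inventory.

```python
from collections import defaultdict


def check_flow(capacity, flow, terminals, tol=1e-9):
    """Return human-readable violations of capacity and conservation rules.

    capacity:  {(src, dst): max rate}
    flow:      {(src, dst): proposed rate}
    terminals: nodes allowed to create or absorb flow (sources and sinks)
    """
    violations = []
    balance = defaultdict(float)  # inflow minus outflow per node
    for (src, dst), amount in flow.items():
        limit = capacity.get((src, dst), 0.0)  # undeclared edges have no capacity
        if not 0.0 <= amount <= limit:
            violations.append(f"{src}->{dst}: flow {amount} outside [0, {limit}]")
        balance[src] -= amount
        balance[dst] += amount
    for node, net in sorted(balance.items()):
        if node not in terminals and abs(net) > tol:
            violations.append(f"{node}: inflow minus outflow is {net}")
    return violations


capacity = {("raw_store", "mixer"): 10, ("mixer", "reactor"): 8, ("reactor", "packaging"): 8}
terminals = {"raw_store", "packaging"}
ok = {("raw_store", "mixer"): 8, ("mixer", "reactor"): 8, ("reactor", "packaging"): 8}
bad = {("raw_store", "mixer"): 10, ("mixer", "reactor"): 9, ("reactor", "packaging"): 9}

print(check_flow(capacity, ok, terminals))  # → []
for violation in check_flow(capacity, bad, terminals):
    print(violation)
```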
