Digital twin creation has moved beyond static models or visual replicas of physical assets. In enterprise environments, it refers to establishing a continuously executing system abstraction that stays synchronized with real-time conditions and supports operational decision-making.

A robust, accessible digital twin must be well architected and consistently maintained. The twin needs to be transparent about how system state is maintained, how dependencies are represented, and how change propagates across assets, processes, and constraints in real time.

What Digital Twin Creation Really Means at Enterprise Scale

At enterprise scale, digital twin creation is not a one-time modeling effort. It represents a shift from static representations to systems that remain synchronized with real-world conditions and can respond as situations change.

In this setting, creating a digital twin means standing up and running a continuously executing system model that maintains state, propagates changes along explicit dependencies, and supports real-time operational decisions.

Early efforts often treated “creation” as a milestone: build the model, connect some data, and declare the twin complete. Twins built in this way begin to break down once assets age, configurations drift, and operating conditions move away from the original assumptions. Without continuous execution and adaptation, the twin quickly stops matching the real system and loses value in day-to-day use.

It is more useful to think of the digital twin as a refinery that processes data continuously. Every element involved in the minute-by-minute production of accurate data needs monitoring, maintenance, and repair, just as an oil refinery or power plant requires constant vigilance.

At the enterprise level, digital twin creation is a process of continuously monitoring the alignment between physical or logical operations, the data that describes them, and the logic used to interpret behavior and trigger action over time.

From Static Models to Continuously Executing Systems

Traditional digital models are descriptive. They capture system structure, parameters, and expected behavior at a specific point in time, making them useful for design validation and offline simulation. However, they typically operate outside live production conditions and remain disconnected from real-time system execution.

A continuously executing digital twin, by contrast, maintains a persistent system state and evolves as events occur. Incoming data does not simply refresh values; it triggers state transitions, propagates through explicit dependencies, and updates system behavior in context. The twin’s job is to show what is happening now and to project how changes will affect future behavior, risk, and corresponding decisions.

To do that, the twin requires specific architectural properties: an explicit model of system entities and dependencies, real-time event handling, and automatic propagation of changes across the resulting dependency graph. Without these foundations, a “digital twin” is effectively another monitoring or reporting view, not an operational system.

This conceptual shift becomes clearer when comparing traditional digital models with execution-grade digital twins.

Traditional Digital Models vs. Execution-Grade Digital Twins

An execution-grade digital twin differs from traditional models along a few practical lines:

| Aspect | Traditional (Static) Digital Models | Execution-Grade Digital Twins |
| --- | --- | --- |
| Core purpose | Visualization and offline analysis | Real-time reasoning and operational execution |
| System state | Fixed or periodically refreshed | Persistent, continuously evolving |
| Data handling | Metrics and batch updates | Event-driven state transitions |
| Dependencies | Implicit or loosely defined | Explicit, graph-based dependency modeling |
| Execution context | Non-production or simulated environments | Live, production-aligned execution |
| Change propagation | Manual or post-analysis | Automatic, dependency-aware propagation |
| Decision support | Advisory insights | Real-time operational decision support |
| Role in operations | Observational artifact | Active participant in system execution |

Put simply, a traditional model helps you reason about the system from the outside, while an execution-grade digital twin becomes an active part of how the system runs.

Why Digital Twin Creation Is an Architectural Problem, Not a Tooling Task

Many digital twin initiatives stall because creation is treated as a tooling decision rather than an architectural commitment. Teams often select modeling, visualization, or analytics platforms before defining how system state, dependencies, and execution logic will be represented, synchronized, and governed over time.

Tools can ingest data, render models, and run simulations, but they do not determine how a system behaves under live operating conditions. Decisions about data flow, state ownership, dependency representation, execution boundaries, and authority determine whether a digital twin can scale, remain trustworthy, and support real-time operational decisions. These are architectural decisions, and they are what make sustained orchestration of all the moving parts possible.

Digital twin creation is not the act of building a digital twin application; it is the discipline of establishing an execution architecture in which such applications can exist. At enterprise scale, creation involves designing an execution environment that maintains persistent state, propagates change through explicit dependencies, and enforces decision logic under real-world constraints. Tools operate within that environment as components, not as its foundation.

Prerequisites for Creating an Execution-Grade Digital Twin

Before building models or integrating data streams, organizations must establish a clear execution foundation. At this level, digital twins are defined less by what they visualize and more by what they are expected to evaluate, influence, and act upon.

Skipping these prerequisites often produces twins that appear sophisticated but lack operational authority, trust, or decision relevance.

Defining System Boundaries and Decision Scope

A digital twin requires clearly defined system boundaries and an explicit decision scope. The first and most important step is to be clear about what the twin will be used to accomplish, the decisions to be supported, and the data required to make those decisions valid.

System boundaries determine what the twin includes and what it deliberately excludes. Boundaries that are too broad introduce unnecessary complexity, while overly narrow ones obscure critical dependencies. Decision scope defines which actions or evaluations the twin is responsible for, such as maintenance prioritization, scheduling adjustments, risk alerts, or controlled intervention.

Without a defined decision scope, the twin remains observational. With one, it becomes an operational participant.

Identifying Assets, Processes, and Dependency Domains

Assets alone do not define a system. Digital twins that model components in isolation fail to capture the interactions that drive real-world behavior.

Execution-grade twins model assets alongside processes, flows, constraints, and shared resources. Together, these form dependency domains: regions of the system where changes propagate across components and processes. Making these domains explicit is essential for understanding bottlenecks, propagation effects, and cascading failure paths.

Without explicit dependency domains, optimization and risk analysis remain local and incomplete.
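As a rough illustration, dependency domains can be sketched as the connected groups in a graph whose nodes are assets, processes, and shared resources. The element names and the `dependency_domains` helper below are hypothetical and are not taken from any particular product.

```python
from collections import defaultdict

def dependency_domains(links):
    """Group elements into dependency domains (connected components).

    `links` is an iterable of (a, b) pairs meaning "a and b affect each other".
    """
    neighbors = defaultdict(set)
    for a, b in links:
        neighbors[a].add(b)
        neighbors[b].add(a)

    seen, domains = set(), []
    for start in sorted(neighbors):
        if start in seen:
            continue
        # Depth-first walk collects everything reachable from `start`.
        stack, domain = [start], set()
        while stack:
            node = stack.pop()
            if node in domain:
                continue
            domain.add(node)
            stack.extend(neighbors[node] - domain)
        seen |= domain
        domains.append(domain)
    return domains

# Hypothetical plant: two pumps share a power feed; a conveyor line is separate.
links = [
    ("pump_a", "power_feed_1"),
    ("pump_b", "power_feed_1"),
    ("pump_b", "cooling_process"),
    ("conveyor_1", "packing_process"),
]
domains = dependency_domains(links)
```

In this sketch the two pumps land in the same domain only because the shared power feed is modeled. Leave that resource out and the pumps look independent, which is exactly the local, incomplete picture described above.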

Understanding Data as Events, Not Just Metrics

Most enterprise systems already generate metrics. Real-time digital twins require event signals that represent meaningful changes in system state rather than continuous streams of raw measurements.

An event-driven approach shifts interpretation from periodic sampling to reactive execution. Events drive state transitions: configuration changes, anomalies, threshold crossings, and operational signals trigger execution logic, propagate through dependency paths, and update the system’s persistent state incrementally.

Treating data as events rather than static measurements is a prerequisite for real-time execution. Without event-driven state transitions, digital twin creation remains constrained by batch analytics and delayed insight, limiting the twin’s ability to reason about and act on live system conditions.
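The difference between a metric stream and an event stream can be shown with a small sketch. It assumes a single numeric signal and a fixed limit; the `ThresholdEvent` type, the `to_events` function, and the sample values are illustrative only.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ThresholdEvent:
    source: str
    kind: str         # "threshold_exceeded" or "threshold_cleared"
    value: float
    timestamp: float

def to_events(source, samples, limit):
    """Yield an event only when the reading crosses `limit`, in either direction.

    `samples` is an iterable of (timestamp, value) pairs.
    """
    above = False  # assumption: the signal starts in the normal range
    for timestamp, value in samples:
        now_above = value > limit
        if now_above != above:
            kind = "threshold_exceeded" if now_above else "threshold_cleared"
            yield ThresholdEvent(source, kind, value, timestamp)
            above = now_above

# Six raw measurements collapse into two meaningful state changes.
samples = [(0, 71.0), (1, 72.5), (2, 80.2), (3, 81.0), (4, 74.9), (5, 73.0)]
events = list(to_events("bearing_temp", samples, limit=75.0))
```

A production detector would normally add hysteresis or debouncing so that a signal hovering near the limit does not flood the twin with events. That is omitted here for brevity.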

Creating a Digital Twin Step by Step: An Execution-First Approach

Creating a digital twin is not a linear modeling exercise or a procedural implementation checklist. It is a progressive alignment of system structure, live state, and execution logic that allows the twin to operate continuously under real operating conditions.

Each step below represents an architectural layer required for effective digital twin creation, not a sequence of tooling or integration tasks. Together, they describe how a digital twin transitions from a static representation to a continuously executing system that reasons about change as conditions evolve.

At a high level, creating a digital twin and operating it sustainably follow five architectural steps.

Step 1: Define Execution Objectives and Decision Scope

Digital twin creation must be anchored in the decisions the system is expected to support, not in the data it happens to expose.

Execution objectives define how the digital twin participates in operational decision-making, including prioritization, impact evaluation, risk detection, and controlled intervention. These objectives determine what the twin is allowed to evaluate, recommend, or influence.

Defining decision scope early anchors the architecture around real operational outcomes and prevents the digital twin from becoming a passive analytics or visualization layer disconnected from execution.

Step 2: Externalize System Structure and Dependencies

With objectives defined, modeling begins by making the system structure explicit and machine-interpretable.

Assets, processes, constraints, and flows must be modeled as explicit, interconnected system elements so that change propagation is predictable and inspectable. More than a blueprint, a digital system representation supports explicit and consistent encoding of how components are connected, what passes through those connections, and the constraints on when and how signals or materials move between components. Structure, not data volume or model complexity, defines how behavior emerges.

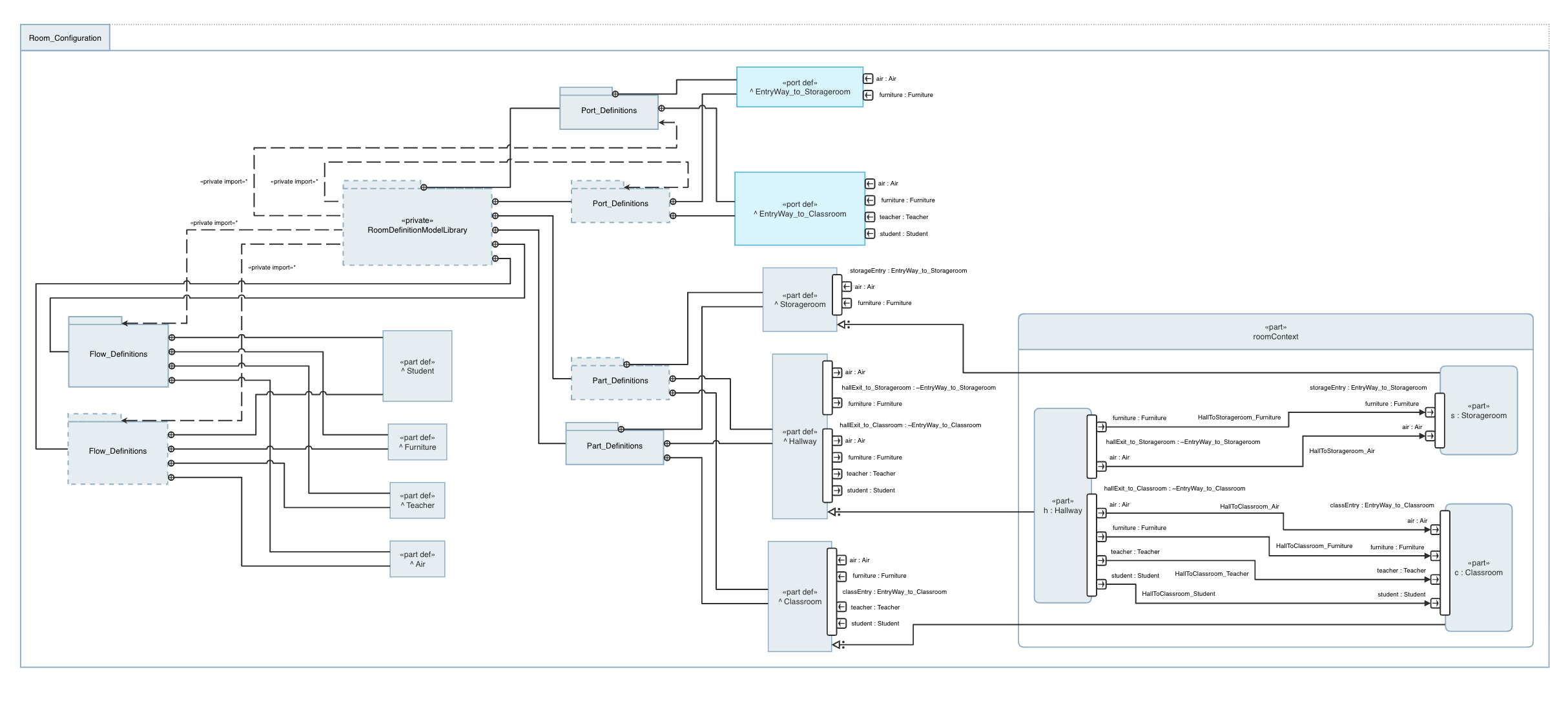

SysML v2 can be used to model system actions and flows between components.

Graph-based dependency modeling enables direct system reasoning and prevents later execution from collapsing into isolated, data-driven components that lack system-level context.
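A minimal sketch of graph-based dependency modeling follows. It uses plain Python rather than SysML v2 or any vendor API, and the `SystemGraph` class, node kinds, and relation labels are assumptions made for illustration. The HVAC element names echo the kind of system discussed later in this article.

```python
from collections import defaultdict, deque

class SystemGraph:
    """Directed graph: an edge src -> dst means a change in src can affect dst."""

    def __init__(self):
        self.kinds = {}                  # node name -> "asset", "flow", ...
        self.edges = defaultdict(dict)   # src -> {dst: relation label}

    def add_node(self, name, kind):
        self.kinds[name] = kind

    def add_edge(self, src, dst, relation):
        if src not in self.kinds or dst not in self.kinds:
            raise KeyError("both endpoints must be declared before linking")
        self.edges[src][dst] = relation

    def downstream(self, start):
        """Return every node reachable from `start`, in breadth-first order."""
        order, seen, queue = [], {start}, deque([start])
        while queue:
            node = queue.popleft()
            for nxt in self.edges.get(node, {}):
                if nxt not in seen:
                    seen.add(nxt)
                    order.append(nxt)
                    queue.append(nxt)
        return order

g = SystemGraph()
for name, kind in [
    ("chiller", "asset"), ("chilled_water_loop", "flow"),
    ("cooling_coil", "asset"), ("supply_fan", "asset"),
    ("supply_air", "flow"), ("space_temperature", "constraint"),
]:
    g.add_node(name, kind)
g.add_edge("chiller", "chilled_water_loop", "supplies")
g.add_edge("chilled_water_loop", "cooling_coil", "feeds")
g.add_edge("cooling_coil", "supply_air", "cools")
g.add_edge("supply_fan", "supply_air", "moves")
g.add_edge("supply_air", "space_temperature", "conditions")

impact_of_chiller = g.downstream("chiller")
```

Because the relationships are data rather than tribal knowledge, a question such as "what could a chiller fault affect?" becomes a graph query instead of an investigation.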

Step 3: Build an Executable System Model with Persistent State

Once the system structure is defined, modeling shifts from description to execution.

An executable system model maintains persistent state and evaluates change incrementally as events occur. Rather than recalculating from periodic snapshots, the model evolves continuously, preserving causal consistency across dependencies.
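One way to picture incremental evaluation is a model that keeps its values between events and re-evaluates only the derived values an event can reach. The sketch below is a simplified illustration under that assumption; `ExecutableModel`, its methods, and the tank example are hypothetical.

```python
class ExecutableModel:
    """Keeps state between events and recomputes only what an event can affect."""

    def __init__(self):
        self.state = {}     # node -> current value (persists across events)
        self.inputs = {}    # derived node -> names of the nodes it reads
        self.rules = {}     # derived node -> function of its input values
        self.readers = {}   # node -> derived nodes that read it

    def add_input(self, name, value):
        self.state[name] = value

    def add_derived(self, name, inputs, rule):
        # Inputs must already exist, so registration order is a valid
        # evaluation order (a node is always defined after what it reads).
        self.inputs[name], self.rules[name] = inputs, rule
        for src in inputs:
            self.readers.setdefault(src, []).append(name)
        self.state[name] = rule(*(self.state[i] for i in inputs))

    def apply_event(self, name, value):
        """Update one input, re-evaluate affected derived nodes, and return them."""
        self.state[name] = value
        affected, frontier = set(), [name]
        while frontier:
            for reader in self.readers.get(frontier.pop(), []):
                if reader not in affected:
                    affected.add(reader)
                    frontier.append(reader)
        recomputed = [n for n in self.rules if n in affected]
        for n in recomputed:
            self.state[n] = self.rules[n](*(self.state[i] for i in self.inputs[n]))
        return recomputed

model = ExecutableModel()
model.add_input("flow_a", 10.0)
model.add_input("flow_b", 5.0)
model.add_input("tank_capacity", 100.0)
model.add_input("ambient_temp", 20.0)
model.add_derived("total_flow", ["flow_a", "flow_b"], lambda a, b: a + b)
model.add_derived("minutes_to_full", ["total_flow", "tank_capacity"], lambda f, c: c / f)
model.add_derived("cooling_needed", ["ambient_temp"], lambda t: t > 30.0)

changed = model.apply_event("flow_a", 15.0)
```

Here the flow event touches `total_flow` and `minutes_to_full` but leaves `cooling_needed` alone, which is the practical difference between evolving a model and recalculating it from a snapshot.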

At this stage, the digital twin transitions from a representation to a running system abstraction that can reason about current conditions and downstream impacts in real time. The models at this stage illustrate how key performance parameters stay in balance, or not, as system conditions change, so that the right interventions can be identified and executed to maintain a smoothly running system.

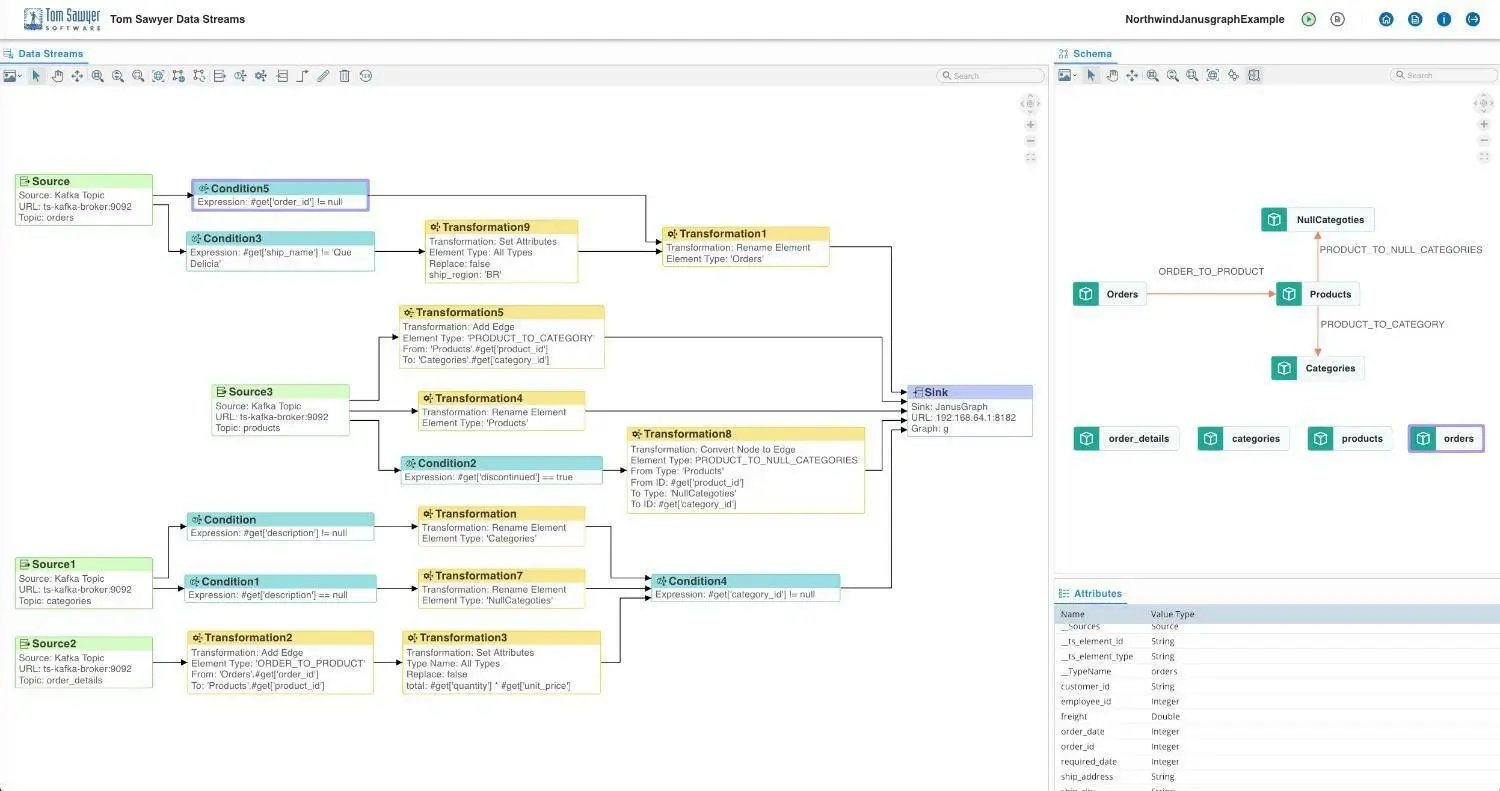

Step 4: Synchronize the Model with Real-Time Event Streams

A digital twin remains useful only if its internal state stays aligned with what is actually happening in the physical or operational system. This requires continuous synchronization with real-time event streams, not periodic refreshes or delayed snapshots.

Operational events update the model incrementally as they occur, allowing localized state changes to propagate through defined dependencies while preserving context and causal consistency. This ensures that decisions are evaluated against current system conditions rather than inferred or outdated states.

Tom Sawyer Data Streams ensures that changes to underlying models and real-time changes in operating parameters are propagated to the digital twin.

Synchronization must also account for structural change. Configuration updates, topology modifications, and resource reassignments alter system relationships and constraints. The model must absorb these changes directly so that reasoning remains valid as the system evolves. The digital twin can represent only the data it has access to and can follow only the digital threads that are explicitly modeled. Many actions that modify the physical or logical layers of the system happen through processes that are functionally invisible to the twin unless change controls are in place to propagate those changes to the models the twin relies upon.

Keeping the physical and execution models fully consistent with the real-world system elements requires appropriate change processes, as well as constant vigilance.
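The sketch below illustrates the point about structural change: state events and topology events pass through the same handler, so an impact query issued after a reassignment follows the new relationships. The event shapes, the `apply` and `impacted` functions, and the feeder example are assumptions for illustration and do not describe how Tom Sawyer Data Streams works.

```python
def apply(model, event):
    """Apply one event to `model`, a dict with 'state' and 'feeds' entries.

    State events change a value. Structural events change the relationships
    themselves, so later impact queries follow the new topology.
    """
    kind = event["type"]
    if kind == "state_changed":
        model["state"][event["node"]] = event["value"]
    elif kind == "link_added":
        model["feeds"].setdefault(event["src"], set()).add(event["dst"])
    elif kind == "link_removed":
        model["feeds"].get(event["src"], set()).discard(event["dst"])
    else:
        # Unknown change types are surfaced rather than silently dropped.
        raise ValueError(f"unhandled event type: {kind}")

def impacted(model, node):
    """Every node reachable from `node` by following 'feeds' links."""
    seen, stack = set(), [node]
    while stack:
        for nxt in model["feeds"].get(stack.pop(), ()):
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return seen

model = {
    "state": {"feeder_1": "energized", "feeder_2": "energized"},
    "feeds": {"feeder_1": {"substation_a"}, "feeder_2": {"substation_b"}},
}
before = impacted(model, "feeder_1")

# A resource reassignment arrives as two structural events.
apply(model, {"type": "link_removed", "src": "feeder_1", "dst": "substation_a"})
apply(model, {"type": "link_added", "src": "feeder_2", "dst": "substation_a"})
after = impacted(model, "feeder_1")
```

If the reassignment had been made in the field without emitting these events, the twin would keep reporting `substation_a` as dependent on `feeder_1`. That is the invisible-change problem that change controls exist to prevent.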

Step 5: Enable Closed-Loop Reasoning and Operational Feedback

Closed-loop reasoning enables digital twin evaluations to influence operational behavior in a controlled, observable way.

Recommendations or actions, whether executed by humans or automated systems, feed back into the model as new events. Outcomes are observed, validated, and incorporated into system state, enabling continuous refinement of assumptions, dependencies, and decision logic.
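A deliberately small sketch of the feedback loop: the twin recommends an action based on an assumed effect, the observed outcome returns as an event, and the assumption is refined. The `CoolingLoop` class, the linear gain model, and the blending rule are illustrative assumptions, not a control algorithm recommended by this article.

```python
class CoolingLoop:
    """Recommends a valve change, then learns from the observed result."""

    def __init__(self, assumed_gain=0.5, learning_rate=0.5):
        self.gain = assumed_gain      # assumed degrees of cooling per 1% valve opening
        self.learning_rate = learning_rate
        self.history = []             # recommendation/outcome pairs kept for audit

    def recommend(self, current_temp, target_temp):
        """Return the valve change (in %) expected to close the temperature gap."""
        return (current_temp - target_temp) / self.gain

    def observe(self, valve_change, temp_before, temp_after):
        """Feed the outcome back in as an event and refine the assumed gain."""
        if valve_change == 0:
            return  # nothing was actuated, so there is nothing to learn from
        observed_gain = (temp_before - temp_after) / valve_change
        self.history.append((valve_change, temp_before, temp_after, observed_gain))
        # Blend the old assumption with what actually happened.
        self.gain += self.learning_rate * (observed_gain - self.gain)

loop = CoolingLoop()
first = loop.recommend(current_temp=26.0, target_temp=24.0)     # expects a 2-degree drop
loop.observe(first, temp_before=26.0, temp_after=25.0)          # only 1 degree achieved
second = loop.recommend(current_temp=25.0, target_temp=24.0)
```

The retained `history` matters as much as the updated gain, because it lets someone later verify why the twin changed its assumption.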

This step embeds the digital twin into the operational fabric while preserving transparency, accountability, and trust in how decisions are derived.

Visualization as an Execution Interface, Not a Dashboard

In real-time digital twin architectures, visualization is not a passive output generated after analysis. It functions as an execution interface through which engineers, operators, and decision-makers observe, validate, and reason about live system behavior as it unfolds.

This distinction is critical. Modern digital twins are no longer static representations or analytical experiments. They are continuously executing system abstractions whose value depends on trust, interpretability, and real-time alignment with operational reality. Visualization is one of the primary mechanisms that sustains that alignment by making system structure, state, and behavior simultaneously visible and inspectable.

Why Traditional Dashboards Fail in Digital Twin Creation

Traditional dashboards were designed to summarize metrics, not to represent systems. They aggregate signals, display trends, and surface threshold-based alerts, an approach that works for isolated KPIs but breaks down in complex, interconnected environments.

Dashboards decouple metrics from system structure. Relationships between assets, processes, and constraints are implied rather than explicitly modeled. As a result, correlations must be inferred manually, root causes must be investigated post hoc, and the downstream impact of local changes remains opaque.

As the scope of a digital twin increases, these limitations become architectural risks. Engineers can see that something changed, but not why it matters. Operators receive alerts without understanding how issues propagate. Decision-makers act on partial context, increasing the likelihood of delayed or misaligned responses.

Successful digital twins may expose many key behavioral metrics, but they also support direct visual navigation to the underlying system components so that users can analyze the discrete behaviors that underlie those metrics.

System-Level Visualization for Reasoning and Trust

Effective digital twins require visualization that exposes system structure, live state, and behavior simultaneously.

Rather than starting with metrics, system-level visualization begins with relationships. Assets, processes, constraints, and flows are represented within a unified system model, allowing users to understand how the system is composed and how behavior emerges as conditions change. Access to historical state data, as well as extrapolations of possible future states, enables users to validate their perceptions and test their assumptions.

This enables reasoning rather than observation. When a change occurs, its context is immediately visible. Dependency paths can be traced directly, and anomalies can be evaluated for root cause and downstream impact rather than treated as isolated symptoms.

For example, the visualization below helps an engineer determine whether a failure to meet the desired space temperature is related to the operation of the air handling system or to the failure of the chilled water system to deliver chilled water to the cooling coil. The relationships are clear, and the status of each element of the system is provided.

Example HVAC system diagram with simulated sensor readings, generated by Tom Sawyer Perspectives from a system graph representation.

Trust emerges when users can verify that what they see reflects how the system actually operates. Visualization becomes a validation surface for the digital twin itself. In practice, synchronization issues, modeling gaps, and data inconsistencies are often detected visually before numerical diagnostics surface them.

Role-Specific Views Without Breaking System Coherence

A common failure in digital twin initiatives is role-based fragmentation. Engineering, operations, planning, and leadership teams are often given separate dashboards, each optimized for a narrow perspective, resulting in multiple interpretations of the same system. Sometimes these views even rely on entirely independent physical or logical models of the system. These differences might be invisible to the users.

Well-architected digital twins avoid this by separating system coherence from view customization. All roles operate on a single executable system model, while visualization adjusts emphasis without altering structure, state, or behavior.

Engineers analyze dependencies and execution paths, operators monitor live state and operational impact, planners explore scenarios, and leadership evaluates risk, all from the same underlying system representation. This shared foundation preserves trust, reduces misalignment, and ensures consistent, execution-ready decision-making across roles.
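The separation between one shared model and several role-specific views can be sketched as read-only projections over the same data. The model contents and view functions below are hypothetical. `MappingProxyType` is used only as a shallow read-only wrapper to make the intent visible.

```python
from types import MappingProxyType

MODEL = {
    "chiller_1": {"kind": "asset", "status": "degraded", "risk": 0.7, "feeds": ["coil_1"]},
    "coil_1": {"kind": "asset", "status": "ok", "risk": 0.2, "feeds": ["zone_3"]},
    "zone_3": {"kind": "space", "status": "too_warm", "risk": 0.4, "feeds": []},
}

# Views receive a read-only mapping: they can project the model, not edit it.
shared = MappingProxyType(MODEL)

def engineer_view(model):
    """Dependencies only: which element feeds which."""
    return {name: tuple(node["feeds"]) for name, node in model.items()}

def operator_view(model):
    """Live status of anything that is not healthy."""
    return {name: node["status"] for name, node in model.items() if node["status"] != "ok"}

def leadership_view(model):
    """A single roll-up: the highest risk in the system and where it sits."""
    name = max(model, key=lambda n: model[n]["risk"])
    return {"highest_risk": name, "risk": model[name]["risk"]}

views = {
    "engineer": engineer_view(shared),
    "operator": operator_view(shared),
    "leadership": leadership_view(shared),
}
```

All three answers come from the same three records, so when the chiller's status changes, every role sees a consistent consequence of that change rather than a separately maintained copy.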

Digital Twin Creation Within a Digital Engineering Environment

Within digital engineering environments, visualization makes execution-grade digital twins understandable, inspectable, and trustworthy. Engineers and operators need to examine system structure, live state, and dependency behavior in real time—without abstracting away the underlying execution logic.

Tom Sawyer Software provides an execution-aware visualization layer that renders live system structure and behavior from executable models and real-time event streams. This exposes system relationships, state transitions, and propagation paths as they occur, enabling efficient human interaction with the twin.

Tom Sawyer Perspectives delivers this capability through a unified system view of assets, processes, constraints, and flows. By making dependencies explicit and state transitions traceable, it allows users to inspect how local changes propagate across the system without fragmenting coherence across multiple dashboards or disconnected tools.

This approach keeps digital twins interpretable and trustworthy as systems evolve, aligning digital twin creation with digital engineering practices that prioritize continuous execution, traceable reasoning, and shared understanding across engineering, operations, and decision-making roles.

Common Challenges in Digital Twin Creation and How Execution Architectures Address Them

As digital twin initiatives move from conceptual pilots into operational systems, the nature of their challenges changes. Early efforts often struggle with tooling, data access, or visualization. At scale, however, the limiting factors are architectural: how systems are represented, how state is maintained under continuous change, and how decisions are governed once the twin becomes part of operational execution.

These challenges consistently emerge when digital twin creation is treated as a finite project or tooling initiative, rather than as a continuous system capability for execution, reasoning, and governance. Execution-grade architectures do not remove complexity. They make it explicit, controllable, and observable. The challenges below are not edge cases; they are structural realities of building digital twins that operate in real time across interconnected systems.

Hidden Dependencies and Emergent Behavior

One of the most persistent challenges in digital twin creation is the gap between how systems are documented and how they actually behave.

In complex operational environments, dependencies emerge through shared resources, implicit sequencing, temporal coupling, and operational constraints that evolve over time. These relationships are often undocumented, scattered across tools, or embedded in human workflows rather than system models.

When digital twins are built on flat data representations or siloed models, these dependencies remain implicit. Behavior appears emergent rather than explainable: small changes produce disproportionate effects, and failures propagate in unexpected ways.

Execution architectures address this by externalizing system structure. Dependencies are modeled explicitly as part of the executable system representation. Assets, processes, constraints, and flows exist within a shared structural context, allowing behavior to be evaluated as the result of interacting relationships rather than isolated events.

This transforms emergent behavior from a source of surprise into an object of analysis. When the system changes, engineers can trace why it changed, which relationships were involved, and how effects propagate. Digital twin creation becomes an exercise in making system behavior inspectable, not merely observable.

Maintaining Real-Time Consistency at Scale

In distributed environments, operational events arrive asynchronously, at different frequencies, and with varying reliability. Latency, partial failure, out-of-order updates, and transient data loss are normal operating conditions. As scale increases, maintaining a coherent view of system state and providing feedback to the right system at the right frequency become increasingly complex.

Time is relative. What "real time" means in terms of latency often depends on context, and the digital twin may have more than one type of real-time requirement. Process control mechanisms and safety systems measure real time in milliseconds, while business decisions might measure it in minutes or hours. It is important to ensure that the expectations for meeting real-time deadlines are well understood at every level.
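One lightweight way to make these expectations explicit is to record a freshness budget for each class of decision and check data age against it before the decision is evaluated. The decision classes and the budget values below are hypothetical placeholders, not recommended limits.

```python
# Hypothetical freshness budgets, in seconds, per class of decision.
FRESHNESS_BUDGET_S = {
    "safety_interlock": 0.05,
    "process_control": 0.5,
    "maintenance_planning": 3600.0,
}

def is_fresh_enough(decision_class, data_timestamp, now):
    """True if data observed at `data_timestamp` is still usable at time `now`.

    Both times are seconds on the same clock. Data stamped in the future is
    rejected as well, since it usually signals a clock problem.
    """
    age = now - data_timestamp
    return 0 <= age <= FRESHNESS_BUDGET_S[decision_class]

# The same five-minute-old reading is fine for planning but not for control.
usable_for_planning = is_fresh_enough("maintenance_planning", 100.0, 400.0)
usable_for_control = is_fresh_enough("process_control", 100.0, 400.0)
```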

Many digital twin implementations relax consistency requirements. They rely on periodic reconciliation, batch updates, or delayed aggregation, accepting temporal drift between the physical system and its digital representation. While sufficient for retrospective analysis, this approach undermines the twin’s ability to support execution and time-sensitive decision-making.

Execution-focused architectures take a different approach. System state is treated as a continuously evolving property of the system model itself. Incoming events update localized portions of the model, and changes propagate incrementally along dependency paths.

This preserves causal validity as event volumes increase and system topology expands. Decisions are evaluated against the current operational state rather than a delayed approximation. The twin does not need perfect synchronization everywhere, but it must remain structurally coherent and temporally meaningful at the points where decisions are made and data is exchanged to enact those decisions.
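Out-of-order delivery is one of the conditions named above. A common, simple policy is last-writer-wins per entity, keyed on the time the event occurred rather than the time it arrived. The sketch below shows that policy only; it is one option among several, and the `EntityState` class is hypothetical.

```python
class EntityState:
    """Last-writer-wins state per entity, keyed on the event's own timestamp."""

    def __init__(self):
        self.values = {}     # entity -> latest accepted value
        self.stamped = {}    # entity -> event time of that value
        self.rejected = []   # stale events, kept so drift can be inspected

    def apply(self, entity, value, event_time):
        """Accept the event unless a newer one for the same entity was already applied."""
        if event_time <= self.stamped.get(entity, float("-inf")):
            self.rejected.append((entity, value, event_time))
            return False
        self.values[entity] = value
        self.stamped[entity] = event_time
        return True

twin_state = EntityState()
arrivals = [
    ("valve_7", "open", 10.0),
    ("valve_7", "closed", 12.0),
    ("valve_7", "open", 11.0),     # delayed in transit; older than what we hold
    ("pump_2", "running", 9.0),    # older clock time, but a different entity
]
accepted = [twin_state.apply(*event) for event in arrivals]
```

Ordering is enforced per entity rather than globally, which reflects the point that the twin needs coherence where decisions are made, not perfect synchronization everywhere.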

At scale, this distinction determines whether a digital twin can safely participate in real-time operations or remains limited to advisory roles.

Security, Governance, and Decision Authority

Digital twins aggregate data across domains, expose system relationships, and increasingly influence operational decisions. This raises questions that access control alone cannot answer. Who is authorized to act on twin insights? Which decisions can be automated, and under what conditions? How are model changes validated, audited, and reversed?

In loosely coupled architectures, governance is often added after the fact. Decisions may be logged, but the reasoning behind them remains opaque. Authority boundaries are enforced externally rather than embedded in the system model.

Execution architectures integrate governance directly into the digital twin’s structure. Decision logic operates within defined authority boundaries. Model changes are versioned and inspectable. Every recommendation or automated action can be traced back to the system state, dependencies, and rules that produced it.
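A minimal sketch of governance embedded in the model, assuming a simple role-to-action authority table: every decision is checked against that table and recorded together with the rule, state, and model version that produced it. The `GovernedTwin` and `Decision` types and the example roles are hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class Decision:
    action: str
    rule: str               # name of the rule that fired
    state_snapshot: dict    # the state the rule was evaluated against
    model_version: str
    approved_by: str

@dataclass
class GovernedTwin:
    model_version: str
    authority: dict                              # role -> set of permitted actions
    audit_log: list = field(default_factory=list)

    def decide(self, role, action, rule, state):
        """Record and return a decision, or refuse if `role` lacks authority."""
        if action not in self.authority.get(role, set()):
            raise PermissionError(f"{role} may not trigger {action}")
        # Copy the state so later updates cannot rewrite the audit record.
        decision = Decision(action, rule, dict(state), self.model_version, role)
        self.audit_log.append(decision)
        return decision

twin = GovernedTwin(
    model_version="v12",
    authority={
        "automation": {"raise_alert"},
        "operator": {"raise_alert", "shut_valve"},
    },
)
live_state = {"line_pressure_bar": 9.1}
decision = twin.decide("operator", "shut_valve", "pressure_above_limit", live_state)
live_state["line_pressure_bar"] = 6.0   # the system moves on; the record does not
```

Because the snapshot and model version travel with the decision, a later reviewer can reconstruct what the twin knew at the time instead of inferring it from a bare log line.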

Security, in this context, extends beyond data protection. It includes protecting the integrity of the system's reasoning itself. Unauthorized changes, inconsistent updates, or unvalidated assumptions are surfaced through structural visibility rather than hidden behind abstraction layers.

This approach enables digital twins to operate safely in environments where accountability, compliance, and trust are non-negotiable. As digital twins gain operational influence, execution architectures ensure that influence remains controlled, transparent, and auditable.

Digital Twin Creation Across Industries

The principles of a reliable and sustainable digital twin architecture remain consistent across industries: execution-driven models synchronized with real-time state and explicit dependency representation. What varies is the operational context: latency tolerance, risk thresholds, regulatory requirements, and decision-making authority. Understanding these industry-specific constraints ensures digital twins deliver actionable intelligence without compromising architectural coherence.

Manufacturing and Industrial Systems

Manufacturing digital twins must represent tightly coupled processes where production behavior emerges from machine availability, material flow, quality conditions, and scheduling decisions interacting simultaneously.

Static dashboards cannot capture this complexity. The digital twin must model process dependencies explicitly, allowing engineers to evaluate how upstream variability and local optimizations affect system-level performance.

By synchronizing live production events with executable models, manufacturers can identify bottlenecks, constraint violations, and degradation patterns as they emerge—enabling continuous adaptation rather than periodic re-optimization.

Energy and Utilities

Energy systems operate as continuously executing networks governed by physical laws and regulatory constraints. Localized disturbances cascade across operational boundaries with minimal tolerance for delay or inconsistency.

Digital twins synchronize streaming telemetry with live system models, enabling operators to evaluate how load shifts, equipment degradation, or control actions will propagate before intervening. This preserves operational coherence as the system continuously rebalances under changing demand, weather conditions, and asset availability.

Transportation and Logistics

Transportation networks are time-sensitive and tightly coupled: delays compound rapidly across assets, routes, schedules, and external dependencies. Static routing models and batch optimization cannot respond effectively under volatile conditions.

Digital twins enable event-driven execution by representing the system as a live graph. Operators can perform dynamic rerouting, capacity reallocation, and coordinated responses in real time—improving resilience when disruptions occur and preventing cascading failures.

Government and Public Sector

Public-sector digital twins face unique demands: long infrastructure lifecycles, distributed decision-making authority, regulatory complexity, and stringent requirements for transparency and accountability.

Like commercial systems, public-sector twins must make decision logic inspectable and traceable. Assets, services, dependencies, and constraints are modeled to show stakeholders how decisions are derived, how resources are allocated, and how outcomes propagate across jurisdictional and organizational boundaries.

This visibility is critical during both planned initiatives—infrastructure modernization, service delivery optimization—and crisis response scenarios requiring rapid coordination across agencies. By maintaining real-time synchronization within a shared system context, digital twins enable coordinated action while building stakeholder trust in complex, high-stakes environments where public scrutiny and long-term accountability are paramount.

Final Thoughts: Digital Twin Creation Is a Continuous System Capability

A digital twin that remains relevant in real operations must evolve continuously alongside the system it represents.

As systems become more interconnected and software-defined, the digital twin becomes part of the execution fabric itself. Its value lies not in mirroring an initial design, but in maintaining alignment between structure, state, and reasoning as conditions change in real time.

Organizations that succeed with digital twins recognize this early. They shift focus from building a twin once to sustaining a system-level capability: governing and maintaining the twin so that it supports ongoing reasoning, adaptation, and decision-making. The critical question is no longer what the system looks like, but how accurately and reliably the digital model can reason, respond, and grow with the system as it evolves.

Ready to Build Your Execution-Grade Digital Twin?

Tom Sawyer Perspectives enables organizations to visualize and reason about complex system dependencies in real time. Request a demo or explore our digital engineering solutions.

About the Author

Max Chagoya is Associate Product Manager at Tom Sawyer Software. He works closely with the Senior Product Manager performing competitive research and market analysis. He holds a PMP Certification and is highly experienced in leading teams, driving key organizational projects and tracking deliverables and milestones.

AI Disclosure: This article was generated with the assistance of artificial intelligence and has been reviewed and fact-checked by Caroline Scharf and Liana Kiff.

FAQs About Digital Twin Creation

How long does it take to create a digital twin?

The timeline depends more on scope and execution maturity than on system size. Initial capability can often be established within weeks by modeling a critical subsystem, integrating limited live data, and enabling basic state propagation. Continuously executing digital twins then evolve incrementally as system boundaries, dependencies, and decision authority expand.

Do digital twins require IoT sensors from day one?

No. Digital twins can begin with existing operational data from sources such as control systems, logs, or historical telemetry. What matters most early on is structural clarity: explicit modeling of assets, processes, and dependencies. IoT sensors can be added later to enrich live execution without redefining the system model. It is critical, however, to ensure that the available data is as fresh as it needs to be and has the fidelity required by the use case the twin was designed to support.

What’s the difference between simulation and digital twin creation?

Simulation evaluates predefined scenarios using static models executed offline.

Digital twin creation establishes a continuously executing system abstraction that remains synchronized with real-world conditions. Instead of asking what could happen, digital twins reason about what is happening now, how effects propagate across the system, and which actions are appropriate under current conditions.

Can a digital twin exist without real-time execution?

Yes, but with limited value. Without continuous state synchronization and executable dependencies, a digital twin becomes a periodically refreshed model rather than an operational system. Execution is what enables reliable, time-sensitive decision support in complex, interconnected environments.
